GPU621/Analyzing False Sharing

Contents

Group Members

Preface

In multicore concurrent programming, if we compare the contention of mutually exclusive locks to "performance killers", then pseudo-sharing is the equivalent of "performance assassins". The difference between a "killer" and an "assassin" is that the killer is visible and we can choose to fight, run, detour, and beg for mercy when we encounter the killer, but the "assassin" is different. The "assassin" is always hiding in the shadows, waiting for an opportunity to give you a fatal blow, which is impossible to prevent. In our concurrent programming, when we encounter lock contention that affects concurrency performance, we can take various measures (such as shortening the critical area, atomic operations, etc.) to improve the performance of the program, but pseudo-sharing is something that we cannot see from the code we write, so we cannot find the problem and cannot solve it. This leads to pseudo-sharing in the "dark", which is a serious drag on concurrency performance, but we can't do anything about it.

What to know before understanding false sharing

Cache Lines

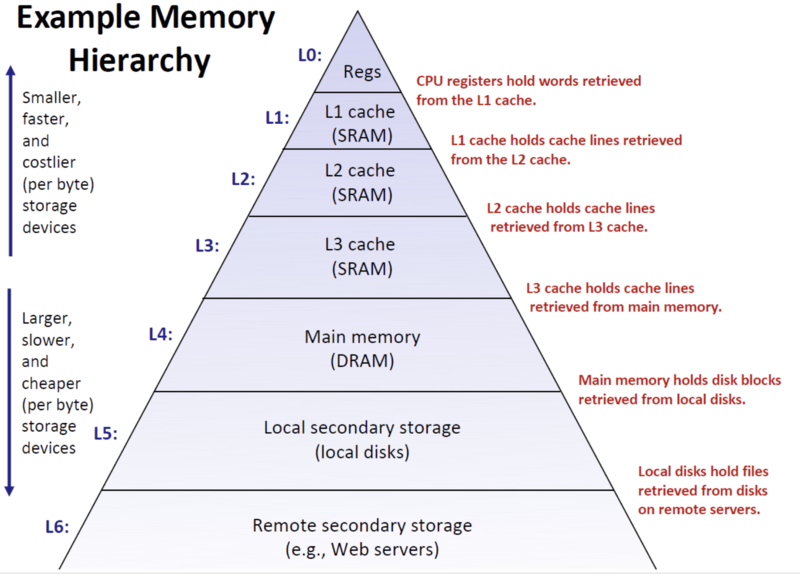

In order to carry out the following discussion, we need to first familiarize ourselves with the concept of cache lines. Students who have studied this part of the OS course on storage architecture should be impressed by the pyramid model of the memory hierarchy, where the pyramid from top to bottom represents a reduction in the cost and larger capacity of the storage medium, and from bottom to top represents an increase in access speed. The top of the pyramid model is located in the CPU registers, followed by the CPU cache (L1, L2, L3), then down to the memory, the bottom is the disk, the operating system uses this storage hierarchy model is mainly to solve the contradiction between the CPU's high speed and memory disk low speed, the CPU will be recently used data read in advance to the Cache, the next time to access the same data, the CPU can be directly from the faster CPU. The next time the same data is accessed, it can be read directly from the faster CPU cache, avoiding slowing down the overall speed by reading from memory or disk.

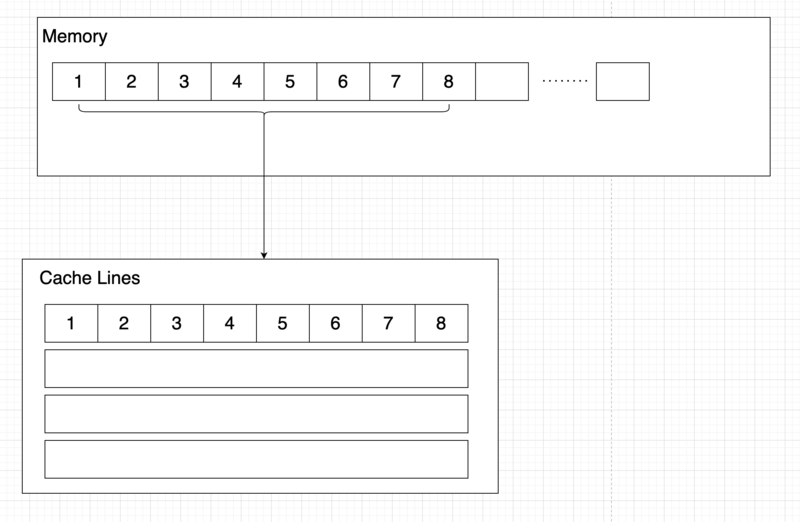

The smallest unit of CPU cache is the cache line, the cache line size varies depending on the architecture, the most common ones are 64Byte and 32Byte, the CPU cache accesses data from within the cache line unit, each time taking the entire cache line where the data needs to be read, even if the adjacent data is not used will also be cached in the CPU cache.

For example, double is 8 bytes in C++ and our cache line is 64 bytes, when we read one of the elements of the double array from memory, the CPU will read the eight elements around that element into the cache line.

Cache Consistency

In the case of single-core CPUs, the above method works fine and ensures that the data cached in the CPU cache is always "clean" because no other CPU will change the data in memory, but in the case of multi-core CPUs, the situation becomes a bit more complicated. In multi-CPU, each CPU has its own private cache (possibly shared L3 cache), and when one CPU1 operates on the cached data in the Cache, if CPU2 has changed the data before, the data in CPU1 is no longer "clean", i.e., it should be invalid. Cache coherency is to ensure that the cache is consistent across multiple CPUs.

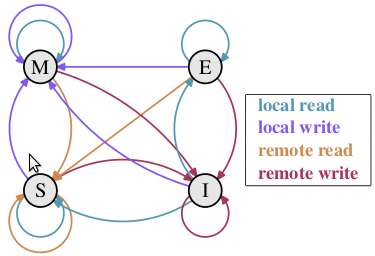

The MESI protocol is used in Linux systems to handle cache coherency, by which is meant the four states of the CPU cache.

- M (Modified): the local processor has modified the cache line, i.e., it is a dirty line, its contents are not the same as those in memory, and there is only one local copy of this cache (proprietary).

- E (Exclusive): the contents of the cache line are the same as in memory and the line is not available to any other processor.

- S (Shared): the contents of the cache line are the same as in memory, and it is possible that a copy of the cache line exists in other processors.

- I (Invalid): The cache line is invalid, and cannot be used.

Each CPU cache line transitions between four states to determine whether the CPU cache is invalidated. For example, if CPU1 performs a write operation on a cache line, this operation will cause the cache line of other CPUs to enter the Invalid state, and the CPU will need to re-read the cache line from memory when it needs to use it. This solves the problem of cache coherency among multiple CPUs.

What is false sharing

What is false-sharing? As we mentioned above, CPU caching is done in cache lines, i.e., in addition to the data it needs to read and write, it will also cache data that is in the same cache line as the data. When the CPU reads the data "d", the CPU will add the eight char data of "abcdefgh" to the CPU cache as a cache line. Suppose the computer has two CPUs: CPU1 and CPU2, CPU1 only reads and writes "a" data frequently, and CPU2 only reads and writes "b" data frequently, it is reasonable to say that these two CPUs do not have any correlation between reading and writing data. However, since the CPU cache is accessed and invalidated by the cache line, even if CPU1 only changes the "a" data in the cache line, it will cause the cache line to be completely invalidated in CPU2, and by the same token, CPU2's change to "b" will also invalidate the cache line. "This causes the cache line to be "ping-ponged" between the two CPUs, and the cache line is invalidated frequently, which eventually leads to a performance degradation of the program, which is false-sharing.

Summary

When a pseudo-share is a real performance bottleneck, it is necessary to try to find and fix it, but in most applications where performance is not as critical, the existence of pseudo-shares is so little harm to the program that it is sometimes not worth the effort and extra memory space (cache line fill) to find pseudo-shares in the system. As I've always said, "Don't over-optimize, don't over-optimize." .