GPU621/GameEngineParallelisation

Game Engine Parallelisation

Project Overview

Parallelisation in and of itself is a difficult task for any application, and it is even harder to implement properly in a game engine. Game engines need to run as efficiently as possible on a wide range of hardware configurations, and they consist of many different pieces that interact with each other. This wiki article covers the basics of parallelising a game engine.

Group Members

- Daniel Kerr

Parallel Structure

Game engines have many systems running in tandem, and a lot of work to do for every frame that needs to be rendered. Old games like Pong and Final Fantasy didn't have much going on at once and could comfortably run as serial applications. Modern games, on the other hand, commonly run at 60 to 144 frames per second, which leaves roughly 16.7 ms down to 6.9 ms per frame, with layers upon layers of systems working in tandem. Running the game as efficiently as possible is a must to keep up with these refresh rates, especially when players demand extreme graphical fidelity without reduced frame rates. Before we parallelise any work, we first need to break it up into tasks for either the CPU or the GPU to handle.

The two main ways to do this are task parallelisation and data parallelisation. Task parallelisation is good for doing multiple things to one set of data, like moving objects while running collision detection. Data parallelisation is good for doing one thing many times across a set of data, like transforming many vertices in a vertex shader.
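The difference between the two can be sketched on the CPU in C++. This is only an illustration: `translateAll`, `analyse`, and `FrameStats` are hypothetical names, and a real engine would not spawn threads this way every frame.

```cpp
#include <algorithm>
#include <cstddef>
#include <future>
#include <numeric>
#include <thread>
#include <vector>

// Data parallelisation: the same operation (translate by dx) is applied
// to disjoint slices of one array, one slice per worker thread.
void translateAll(std::vector<float>& xs, float dx, std::size_t workers) {
    if (workers == 0) workers = 1;
    const std::size_t chunk = (xs.size() + workers - 1) / workers;
    std::vector<std::thread> threads;
    for (std::size_t w = 0; w < workers; ++w) {
        const std::size_t begin = std::min(xs.size(), w * chunk);
        const std::size_t end = std::min(xs.size(), begin + chunk);
        threads.emplace_back([&xs, dx, begin, end] {
            for (std::size_t i = begin; i < end; ++i) xs[i] += dx;
        });
    }
    for (auto& t : threads) t.join();
}

// Task parallelisation: two different operations run concurrently
// over the same read-only data set (assumed non-empty).
struct FrameStats { float sum; float maxX; };

FrameStats analyse(const std::vector<float>& xs) {
    auto sumTask = std::async(std::launch::async, [&xs] {
        return std::accumulate(xs.begin(), xs.end(), 0.0f);
    });
    auto maxTask = std::async(std::launch::async, [&xs] {
        return *std::max_element(xs.begin(), xs.end());
    });
    return FrameStats{sumTask.get(), maxTask.get()};
}
```

Calling `translateAll(positions, 1.0f, 2)` moves every element with two workers, while `analyse(positions)` computes two unrelated results at once.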

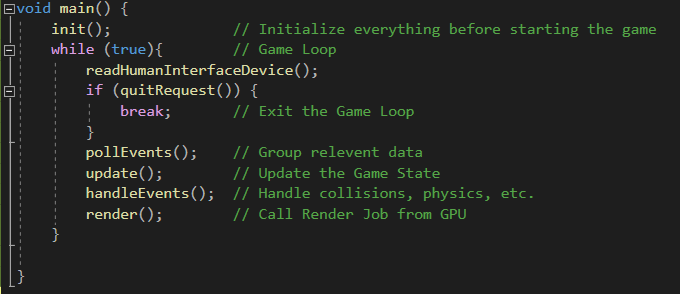

Game Loop

The game loop is the core of any game. It runs continuously: reading Human Interface Devices, updating all of the game systems, handling collision, physics, and everything else, and finally rendering it all to the screen. It is important to set everything up here in an efficient way that allows for parallelisation.
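A serial version of this loop might look like the sketch below. All names (`readInput`, `update`, `render`, `runGameLoop`) are hypothetical stand-ins; the input function simply fakes a player holding "right" and quitting on a given frame, and `render` only counts frames.

```cpp
// Assumed, simplified types for illustration only.
struct Input { float moveX; bool quit; };
struct GameState { float playerX = 0.0f; int framesRendered = 0; };

// Stand-in for polling Human Interface Devices.
Input readInput(int frame, int quitFrame) {
    return Input{1.0f, frame >= quitFrame};
}

// Stand-in for all game systems (physics, collision, AI, ...).
void update(GameState& state, const Input& input, float dt) {
    state.playerX += input.moveX * dt;
}

// Stand-in for drawing the frame.
void render(GameState& state) {
    ++state.framesRendered;
}

// The serial loop: input, then update, then render, once per frame.
GameState runGameLoop(int quitFrame, float dt) {
    GameState state;
    for (int frame = 0;; ++frame) {
        const Input input = readInput(frame, quitFrame);
        if (input.quit) break;
        update(state, input, dt);
        render(state);
    }
    return state;
}
```

Every stage here waits on the one before it, which is exactly what the parallelisation options in the following sections try to relax.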

Parallelisation Options

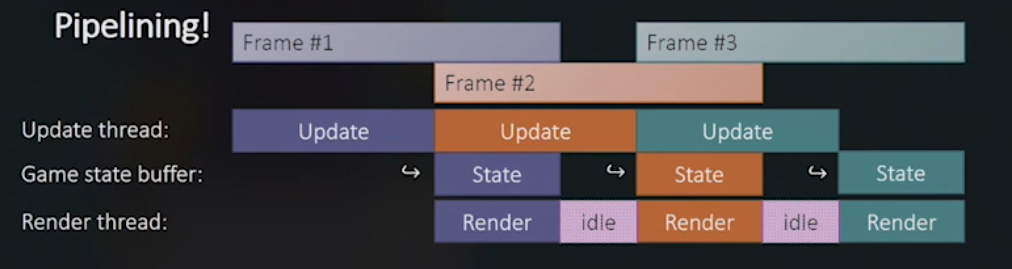

One Thread per Subsystem

The easiest way to start parallelising a game engine is to dedicate threads to certain activities. You can have one thread that exclusively updates the simulation, and another dedicated to rendering. To avoid unsafely sharing data between threads, the update thread can write to a buffer that is passed to the rendering thread once the update is done. The rendering thread can then render that fixed game state while the game engine continues on to update the next frame's data.

This was fine for games in the early 2000s, but it wastes a lot of time that could be used to compute something else. This approach requires many threads to handle many systems, and it does not scale well with hardware. Having a 16 core CPU won't make the game run faster; it just leaves more CPU cores idle. If you have too few cores, the operating system has to context switch between these subsystems every frame, and context switching creates a lot of overhead that hurts the performance of the game. To make use of the idle cores we need a different solution.
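The buffer hand-off between an update thread and a render thread could be sketched as follows. `FrameHandoff`, `FrameState`, and `runTwoThreads` are hypothetical names, and the "renderer" just records which frame it received; a real engine would likely swap buffers rather than copy them.

```cpp
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

struct FrameState { int frame = 0; float playerX = 0.0f; };

// One-slot hand-off: the updater publishes a finished frame,
// the renderer takes a private snapshot of it.
class FrameHandoff {
public:
    void publish(const FrameState& state) {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return !full_; });  // wait until the last frame was taken
        slot_ = state;
        full_ = true;
        cv_.notify_all();
    }
    void finish() {
        std::lock_guard<std::mutex> lock(mutex_);
        done_ = true;
        cv_.notify_all();
    }
    // Returns false once the updater has finished and nothing is left to render.
    bool acquire(FrameState& out) {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return full_ || done_; });
        if (!full_) return false;
        out = slot_;
        full_ = false;
        cv_.notify_all();
        return true;
    }
private:
    std::mutex mutex_;
    std::condition_variable cv_;
    FrameState slot_;
    bool full_ = false;
    bool done_ = false;
};

std::vector<int> runTwoThreads(int frames) {
    FrameHandoff handoff;
    std::vector<int> rendered;  // only touched by the render thread until join
    std::thread updater([&] {
        FrameState state;
        for (int f = 0; f < frames; ++f) {
            state.frame = f;
            state.playerX += 1.0f;   // simulate the frame
            handoff.publish(state);  // hand the finished state over
        }
        handoff.finish();
    });
    std::thread renderer([&] {
        FrameState snapshot;
        while (handoff.acquire(snapshot)) rendered.push_back(snapshot.frame);
    });
    updater.join();
    renderer.join();
    return rendered;
}
```

While the renderer works on its snapshot, the updater is already simulating the next frame, but no more than two cores are ever busy no matter how many the machine has.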

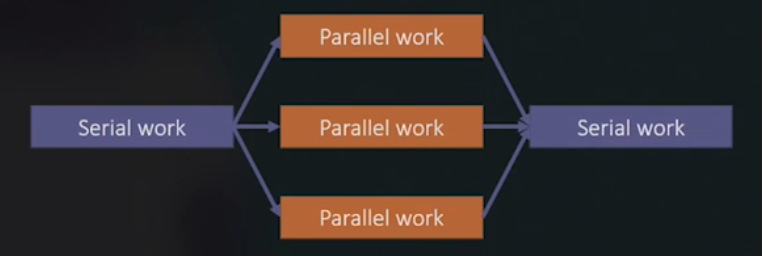

Fork / Join

The next logical step in parallelising the code is to spawn threads as they are needed. Tasks can be offloaded from the main thread as they come up, and threads won't sit idle so long as there is work to do. Rendering, inverse kinematics, animation state handling, particle systems, enemy AI, and anything else desired can easily be sent to a thread. The only consideration for what should be multithreaded is that the work must cost more than the overhead of spawning a thread. Spawning threads is an expensive process, however, and doing it every frame will grind things to a halt. We can vectorize loops and use a SIMD design to ease things, but the biggest optimization we can make is using thread pools.
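A minimal fork / join frame update might look like this. The `World` layout and `updateFrameForkJoin` are assumptions made for illustration; each forked thread touches a different member, so no locking is needed.

```cpp
#include <thread>
#include <vector>

// Assumed, simplified world data for illustration only.
struct World {
    std::vector<float> particles;
    std::vector<float> bones;
    int aiDecisions = 0;
};

// Fork: spawn one thread per independent piece of work.
// Join: wait for all of them before the frame continues.
void updateFrameForkJoin(World& world, float dt) {
    std::thread particles([&world, dt] {
        for (float& p : world.particles) p += dt;
    });
    std::thread animation([&world] {
        for (float& b : world.bones) b *= 2.0f;
    });
    std::thread ai([&world] { ++world.aiDecisions; });

    particles.join();
    animation.join();
    ai.join();
}
```

Note that three threads are created and destroyed on every call, which is the per-frame spawning cost described above.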

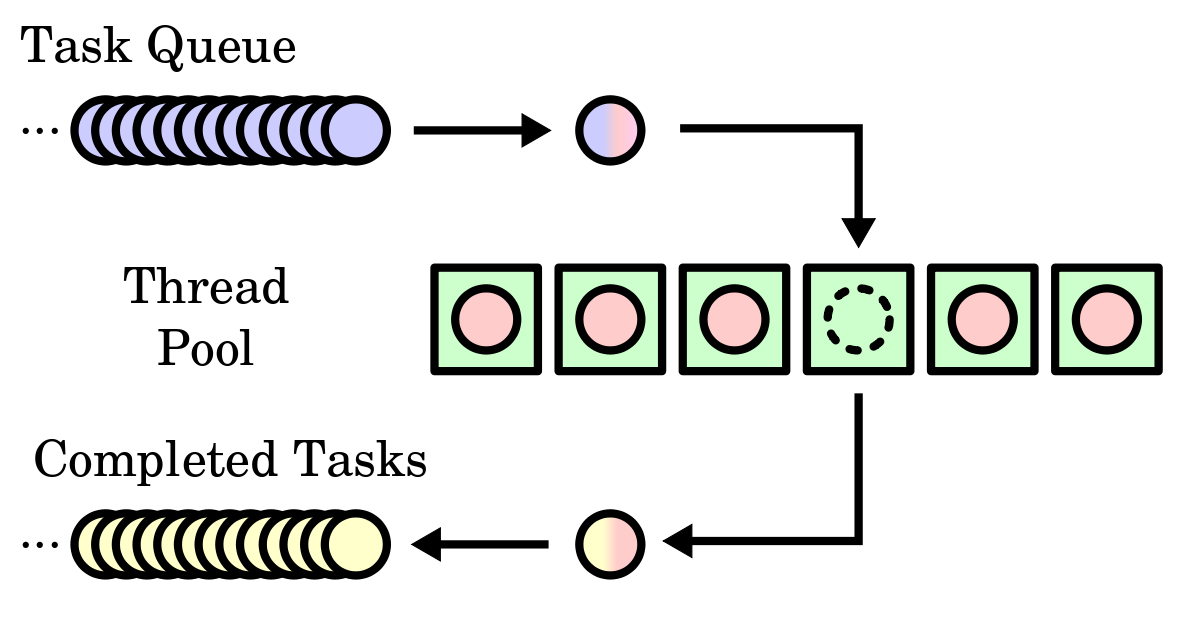

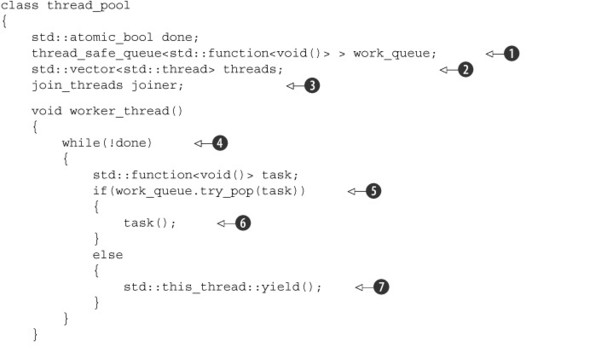

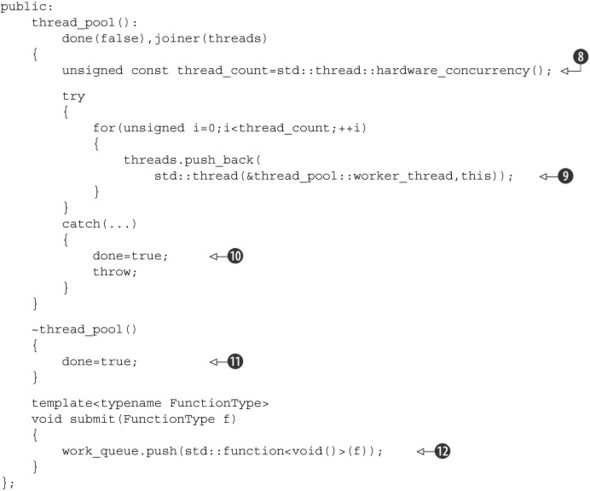

Thread Pools

A thread pool is a group of threads that are spawned at the start of a program, usually one for every available CPU core. Instead of spawning a thread whenever one is needed, tasks are sent to an idle thread in the pool.

(Simple Thread Pool Implementation)

Job Systems

Setting up a game engine to use thread pools directly can get difficult to maintain, so another layer was created on top of them: job systems. A job system is an abstraction over a thread pool. Instead of sending the data to process into the thread pool directly, all jobs to be executed are sent to a queue. The job queue is then scheduled by the game engine and sent off to a thread pool (or other system) for execution. Job systems are highly customizable because jobs can be arbitrarily fine-grained: each job can do as much or as little work as required, and jobs within one system can differ greatly in size.

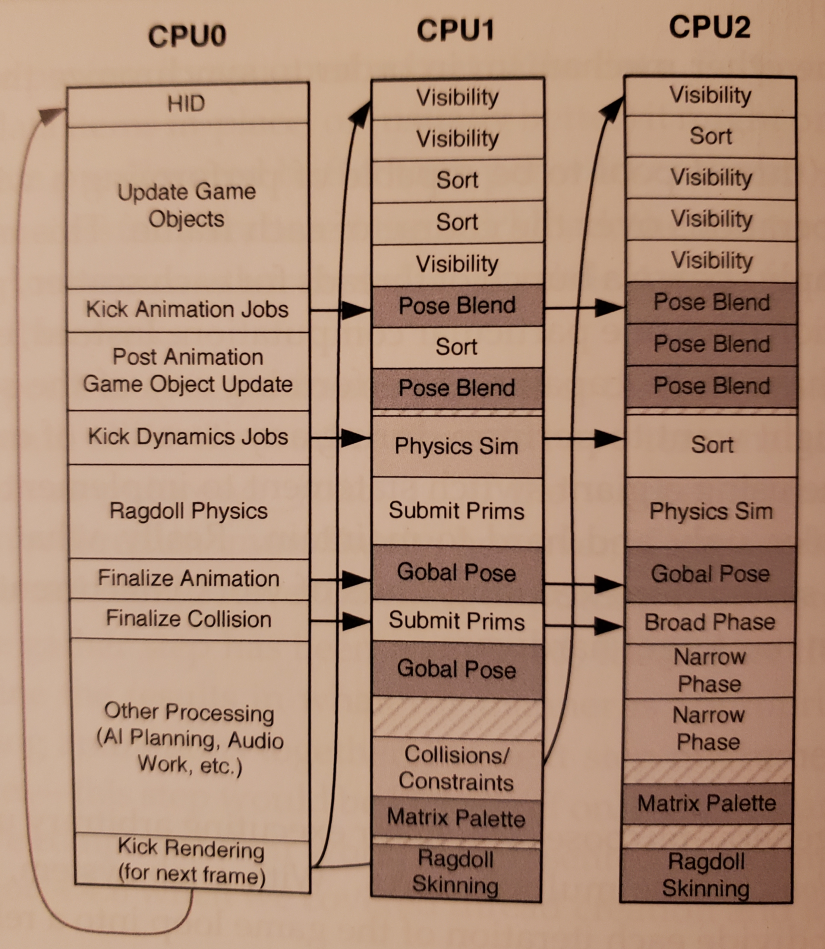

(Job System Game Loop Diagram)

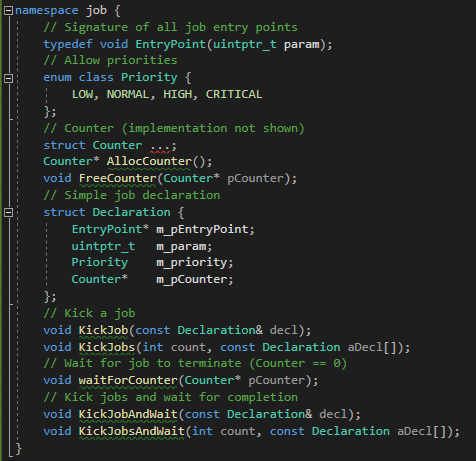

With the entire point of a job system being to simplify the use of thread pools, implementing them as a simple API is not only desirable, it is necessary. A well-designed job system has certain operations that each job needs to support:

- Kicking a job (starting it)

- Waiting for a job

- Sleeping a job (halting execution)

- Waking a job (continuing execution of a sleeping job)

Well-designed job systems also have certain attributes that they must contain:

- uintptr_t to the jobs themselves

- Priority status

- Locks
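One possible shape for such an API is sketched below. The names `JobDeclaration`, `JobQueue`, `kick`, and `runNext` are assumptions of this example rather than a fixed standard, and only kicking and priority ordering are shown; sleeping and waking need the mechanisms discussed in the following sections.

```cpp
#include <cstdint>
#include <mutex>
#include <queue>
#include <vector>

enum class Priority { Low, Normal, High };

// A job is an entry point plus an opaque uintptr_t parameter.
using JobEntryPoint = void(std::uintptr_t param);

struct JobDeclaration {
    JobEntryPoint* entryPoint;
    std::uintptr_t param;
    Priority priority;
};

class JobQueue {
public:
    // "Kick" a job: place it in the queue for later execution.
    void kick(const JobDeclaration& job) {
        std::lock_guard<std::mutex> lock(mutex_);
        jobs_.push(job);
    }

    // Run the highest-priority pending job; returns false if the queue is empty.
    // In a full system, worker threads would call this in a loop.
    bool runNext() {
        std::unique_lock<std::mutex> lock(mutex_);
        if (jobs_.empty()) return false;
        const JobDeclaration job = jobs_.top();
        jobs_.pop();
        lock.unlock();  // execute the job outside the lock
        job.entryPoint(job.param);
        return true;
    }

private:
    struct ByPriority {
        bool operator()(const JobDeclaration& a, const JobDeclaration& b) const {
            return a.priority < b.priority;  // higher priority comes out first
        }
    };
    std::mutex mutex_;
    std::priority_queue<JobDeclaration, std::vector<JobDeclaration>, ByPriority> jobs_;
};

// Example job: the uintptr_t parameter carries a pointer to its arguments.
struct RecordArgs { std::vector<int>* log; int id; };

void recordJob(std::uintptr_t param) {
    auto* args = reinterpret_cast<RecordArgs*>(param);
    args->log->push_back(args->id);
}
```

Passing the parameter as a `uintptr_t` keeps the declaration the same size for every job, at the cost of a cast inside each entry point.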

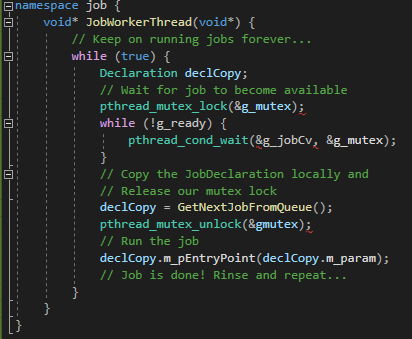

Job With Thread Pool

When working with jobs and a thread pool, a Job Worker would handle each job in the queue, locking it, sending the job to a thread, and unlocking it afterwards. A problem arises, however, when trying to halt the job (sleep & wake).

Job Halting

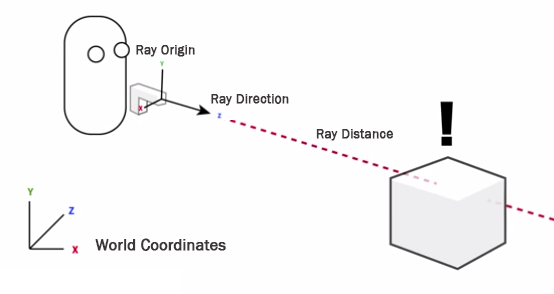

Consider a job for an enemy to fire a raycast at the player and react according to whether it hit. The enemy would have to wait for the encapsulated raycast job to finish executing before continuing. With a thread pool, this requires a full context switch of the thread to the new job. This is akin to spawning jobs with the fork / join model, and is a big performance hit. To accommodate this use case, there are 3 common ways to handle jobs other than thread pools.

Jobs as Coroutines

Basing the job system on coroutines instead of thread pools allows one job to explicitly yield to another. The running job halts its execution and resumes from where it left off once the new job finishes. This implementation swaps the call stack of the currently executing job with that of the new job, instead of offloading the new job to another thread or context switching to it.

Jobs as Fibers

Fibers are similar to threads, with one big difference: fibers are cooperatively scheduled, whereas threads are preemptively scheduled. Preemptive scheduling keeps CPU cores from being stuck on one rogue program. In a game engine, however, a program taking up all of the CPU time is a good thing, because it means the CPU core is not sitting idle. Fibers are implemented for jobs by running fibers on the CPU threads and giving the jobs to those fibers. Just like coroutines, one fiber can yield to another by saving its call stack and switching to the new fiber's call stack. Fibers are the most widely used way to implement job systems in the game industry.
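The cooperative stack switch can be illustrated with the POSIX `ucontext` functions (`getcontext`, `makecontext`, `swapcontext`). This is an assumption-laden sketch: it targets Linux/glibc, these functions are obsolescent in newer POSIX revisions, Windows offers a separate fiber API, and production engines often use hand-written context switches instead. The names `enemyJob` and `runFiberDemo` are hypothetical.

```cpp
#include <ucontext.h>
#include <string>
#include <vector>

namespace {
ucontext_t g_schedulerCtx;                  // saved state of the "scheduler"
ucontext_t g_fiberCtx;                      // saved state of the fiber
std::vector<std::string>* g_log = nullptr;  // shared log for the demo

// A job running on a fiber: it yields in the middle and is resumed later.
void enemyJob() {
    g_log->push_back("enemy: request raycast");
    swapcontext(&g_fiberCtx, &g_schedulerCtx);  // cooperative yield
    g_log->push_back("enemy: react to raycast result");
}
}  // namespace

std::vector<std::string> runFiberDemo() {
    std::vector<std::string> log;
    g_log = &log;

    std::vector<char> stack(64 * 1024);  // the fiber's own call stack
    getcontext(&g_fiberCtx);
    g_fiberCtx.uc_stack.ss_sp = stack.data();
    g_fiberCtx.uc_stack.ss_size = stack.size();
    g_fiberCtx.uc_link = &g_schedulerCtx;  // where to go when the job returns
    makecontext(&g_fiberCtx, enemyJob, 0);

    swapcontext(&g_schedulerCtx, &g_fiberCtx);  // start the fiber
    log.push_back("scheduler: run raycast job");
    swapcontext(&g_schedulerCtx, &g_fiberCtx);  // resume the fiber where it yielded
    log.push_back("scheduler: fiber finished");
    return log;
}
```

The enemy job never gives up its thread to the operating system; it only swaps stacks with the scheduler, which is what makes sleeping and waking jobs cheap.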

Job Counters

When implementing job systems with coroutines or fibers, the ability to put jobs to sleep and wake them back up is inherent to the system. With this functionality, a job counter can be implemented to work like a join() function. By keeping a counter of the number of jobs running, and decrementing it whenever a job finishes executing, we can monitor the counter like the inverse of a semaphore and continue execution once all of the jobs have completed. This is good for batch jobs, but does not scale well to large numbers of jobs, because the results are not consumed until the entire batch has executed.
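The counter idea can be sketched with plain threads. In a fiber-based system the waiting job would be put to sleep and its thread reused; here, as a simplifying assumption, the waiter blocks on a condition variable instead. `JobCounter` and `runBatch` are hypothetical names.

```cpp
#include <atomic>
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

class JobCounter {
public:
    explicit JobCounter(int jobCount) : remaining_(jobCount) {}

    // Called by each job when it finishes.
    void decrement() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (--remaining_ == 0) cv_.notify_all();
    }

    // Behaves like join(): returns once every job has decremented the counter.
    void waitUntilZero() {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return remaining_ == 0; });
    }

private:
    std::mutex mutex_;
    std::condition_variable cv_;
    int remaining_;
};

// Runs a batch of jobs (job i contributes the value i) and returns the total.
int runBatch(int jobCount) {
    JobCounter counter(jobCount);
    std::atomic<int> total{0};
    std::vector<std::thread> jobs;
    for (int i = 0; i < jobCount; ++i) {
        jobs.emplace_back([&counter, &total, i] {
            total += i;           // the job's actual work
            counter.decrement();  // signal completion
        });
    }
    counter.waitUntilZero();  // results are only read after the whole batch is done
    const int result = total.load();
    for (auto& job : jobs) job.join();
    return result;
}
```

The single wait at the end is what delays every result until the slowest job in the batch has finished.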

GPU Parallelisation

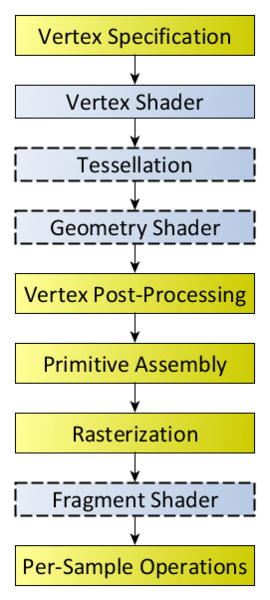

While the intricacies of GPU parallelisation are out of scope for this wiki article, there are some basic ideas that can be discussed here. GPU work follows a set pipeline called the Rendering Pipeline. The OpenGL API will be the focus of this section.

Not all portions of the rendering pipeline are accessible to the programmer, as some are handled automatically by OpenGL. The stages that the programmer can manipulate are the Vertex Shader, Tessellation, the Geometry Shader, and the Fragment Shader. These stages are programmed through shader files written in GLSL (OpenGL Shading Language). GLSL is a very limited language, which makes some optimizations difficult to implement, if not impossible.

Communication from the CPU to the GPU takes time, as there is latency inherent in the communication process, so minimizing communication is important. Usually communication with the GPU is only done when submitting a render job. GPUs automatically split up the work given to them by OpenGL, leaving optimizations to be done in the shaders.

Passing less data to the GPU gives it less work to do overall and lessens the communication latency. This can be done through culling any objects that are unused (not in the player's view), and structuring data in an optimal way for the GPU. The GPU doesn't care about the internal animation state of the objects; it only cares about what it needs to render in the current frame, so passing only the vertex data instead of the entire model object is optimal.
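The CPU-side preparation described above could be sketched as follows. Everything here is a simplifying assumption: `GameObject`, `isInViewDepth`, and `buildVertexBuffer` are hypothetical, and the visibility test only checks depth in front of a camera at the origin looking down the negative z axis, where a real engine would test against the full view frustum. No OpenGL calls are made; the returned flat array is the kind of data that would then be uploaded in a single transfer.

```cpp
#include <vector>

struct Vec3 { float x, y, z; };

// CPU-side object: vertex data plus state the GPU never needs to see.
struct GameObject {
    Vec3 center;
    float radius;                // bounding sphere radius
    std::vector<Vec3> vertices;
    int animationState;          // stays on the CPU
};

// Simplified cull test: keep objects whose bounding sphere overlaps
// the depth range [nearZ, farZ] in front of the camera.
bool isInViewDepth(const GameObject& object, float nearZ, float farZ) {
    const float nearest = -object.center.z - object.radius;
    const float farthest = -object.center.z + object.radius;
    return farthest >= nearZ && nearest <= farZ;
}

// Pack only the vertex positions of visible objects into one flat array.
std::vector<float> buildVertexBuffer(const std::vector<GameObject>& scene,
                                     float nearZ, float farZ) {
    std::vector<float> buffer;
    for (const GameObject& object : scene) {
        if (!isInViewDepth(object, nearZ, farZ)) continue;  // culled
        for (const Vec3& v : object.vertices) {
            buffer.push_back(v.x);
            buffer.push_back(v.y);
            buffer.push_back(v.z);
        }
    }
    return buffer;
}
```

Objects behind the camera contribute nothing to the buffer, and the animation state never leaves the CPU.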

This topic can go just as deep as parallelising the CPU work, so further research is needed for a more complete understanding of parallelising the GPU load.

Presentation

Media:Parallelisation_in_Game_Engines.pdf

Sources

- “2 ...LEL GAME ENGINE ARCHITECTURES.” Maxwell, https://www.maxwell.vrac.puc-rio.br/16309/16309_3.PDF.

- Multithreading the Entire Destiny Engine - YouTube. https://www.youtube.com/watch?v=v2Q_zHG3vqg.

- GCAP 2016: Parallel Game Engine Design - YouTube. https://www.youtube.com/watch?v=JpmK0zu4Mts.

- Destiny's Multithreaded Rendering Architecture - YouTube. https://www.youtube.com/watch?v=0nTDFLMLX9k.

- Andersson, Johan. “Parallel Futures of a Game Engine (v2.0).” SlideShare, https://www.slideshare.net/repii/parallel-futures-of-a-game-engine-v20.

- “Sponsored Feature: Designing the Framework of a Parallel Game Engine.” Gamasutra, https://www.gamasutra.com/view/feature/3941/sponsored_feature_designing_the_.php.

- “Rendering Pipeline Overview.” OpenGL Wiki, https://www.khronos.org/opengl/wiki/Rendering_Pipeline_Overview.

- “Chapter 9. Advanced Thread Management.” C++ Concurrency in Action: Practical Multithreading, https://livebook.manning.com/book/c-plus-plus-concurrency-in-action/chapter-9/1.

- Gregory, Jason. Game Engine Architecture. 3rd ed., CRC Press, 2019.