GPU621/Intel DAAL

Intel DAAL

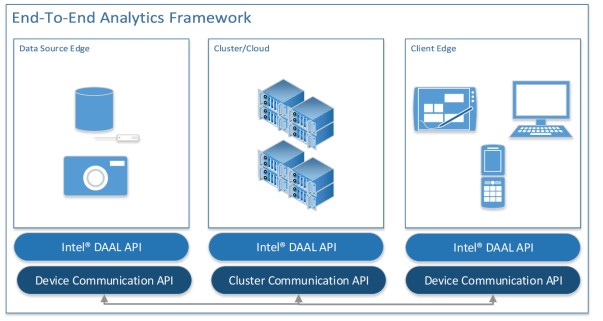

The Intel Data Analytics Acceleration Library (DAAL) is a library optimized for analytics on large data sources. It covers the full range of analytics tasks: preprocessing, transformation, analysis, modeling, validation, and decision making. This makes it flexible enough to be used in many end-to-end analytics frameworks.

Having a complete framework is a powerful advantage, since we can be assured that all parts of the system will link together.

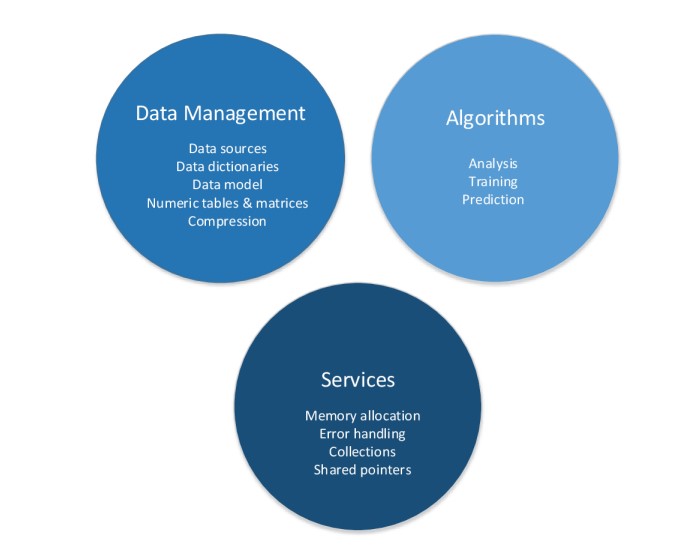

The framework is composed of three major components: data management, algorithms, and services.

Data management --

The algorithms component of the library supports three computation modes: batch processing, online processing, and distributed processing, each of which is discussed later. To optimize performance, Intel DAAL builds on routines from the Intel Math Kernel Library (MKL) and the Intel Integrated Performance Primitives (IPP).

Services --

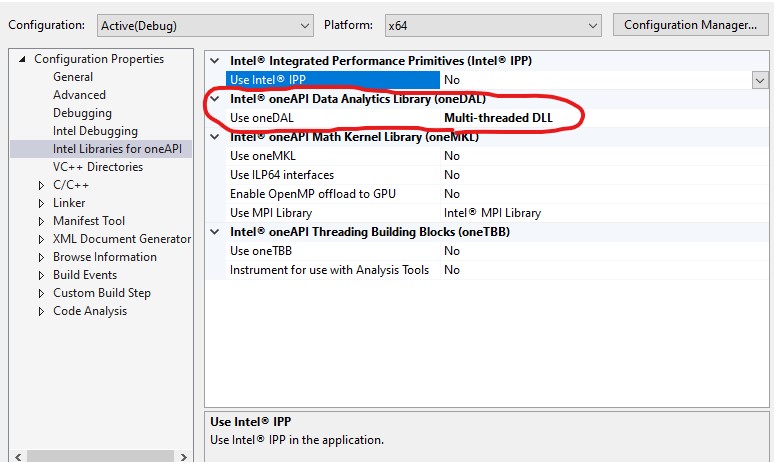

How to enable:

1) Download Intel oneAPI Base Toolkit

2) Project Properties -> Intel Libraries for oneAPI -> Use oneDAL

Computation modes:

Batching:

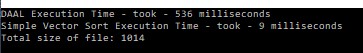

Most of the library's algorithms appear to run in simple batch mode. As I understand it, batch processing is the mode closest to ordinary serial code: the algorithm works on the entire block of data in a single call. However, the library is still well optimized even in this mode. For example, the sort function:

We can see that the DAAL sort is much slower than a quick sort on a vector when the data collection is small, but as the data set grows it outperforms the quick sort by a widening margin.
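A comparison like this can be reproduced with a small timing harness. The sketch below covers only the standard-library baseline and does not call DAAL; the helper names `makeData` and `timeSortMs` are hypothetical. The DAAL sort would be timed the same way, by wrapping its call between the two clock readings.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <random>
#include <vector>

// Fill a vector with n pseudo-random floats (fixed seed so runs are repeatable).
// Hypothetical helper, not part of DAAL.
std::vector<float> makeData(std::size_t n, unsigned seed = 42) {
    assert(n > 0);
    std::mt19937 gen(seed);
    std::uniform_real_distribution<float> dist(0.0f, 1.0f);
    std::vector<float> v(n);
    for (auto& x : v) x = dist(gen);
    return v;
}

// Sort the whole vector in one call (batch style) and return the elapsed
// wall-clock time in milliseconds.
double timeSortMs(std::vector<float>& v) {
    auto start = std::chrono::steady_clock::now();
    std::sort(v.begin(), v.end());
    auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double, std::milli>(stop - start).count();
}
```

Calling `timeSortMs` on vectors from `makeData` at several sizes (for example 1,000, 100,000 and 10,000,000 elements) gives the baseline curve to compare the DAAL timings against.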

Online:

DAAL also supports online processing. In online mode, chunks of data are fed into the algorithm sequentially, so not all of the data is accessed at once. This is very beneficial when working with large data sets. As you can see in the example code, the number of rows in each block of data being extracted is defined explicitly. That setting is missing from the sort example we just looked at, which simply took all of the data at once.
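The idea behind online processing can be sketched without the library: keep a small partial result, update it once per block, and compute the final answer only at the end. The example below is a plain C++ illustration, not DAAL's API; `PartialMoments`, `update`, and `finalize` are hypothetical names chosen for this sketch.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Running state kept between blocks. Only this small struct, never the
// full data set, has to stay in memory.
struct PartialMoments {
    std::size_t count = 0;
    double sum = 0.0;
    double sumSquares = 0.0;
};

// Fold one block of values into the partial result.
void update(PartialMoments& p, const std::vector<double>& block) {
    for (double x : block) {
        p.count += 1;
        p.sum += x;
        p.sumSquares += x * x;
    }
}

struct Moments {
    double mean;
    double variance;
};

// Turn the accumulated partial result into the final answer.
// Uses the simple sum-of-squares formula for the population variance,
// which is easy to read but not the most numerically robust choice.
Moments finalize(const PartialMoments& p) {
    assert(p.count > 0);
    double mean = p.sum / p.count;
    double variance = p.sumSquares / p.count - mean * mean;
    return {mean, variance};
}
```

Each block can be read from disk, passed to `update`, and then discarded; the block size plays the same role as the row count set in the online example.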

Distributed:

The final computation mode in the library is distributed processing. This is exactly what it sounds like: different chunks of data are handed to different compute nodes, and the partial results are finally combined in one place. The example used here is K-means clustering, which takes a set of data vectors and groups them around the cluster centers they end up concentrating near.
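One K-means iteration splits naturally into a local step and a combining step, which is what makes it a good fit for distributed processing. The sketch below simulates that split in plain C++ with 2-D points; it does not use DAAL, and `PartialSums`, `localStep`, and `masterStep` are hypothetical names. In a real deployment each `localStep` call would run on a separate node and only the small partial sums would travel over the network.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Point = std::array<double, 2>;

// Per-centroid running totals produced by one node.
struct PartialSums {
    std::vector<Point> sums;          // coordinate sums of points assigned to each centroid
    std::vector<std::size_t> counts;  // number of points assigned to each centroid
};

double squaredDistance(const Point& a, const Point& b) {
    double dx = a[0] - b[0], dy = a[1] - b[1];
    return dx * dx + dy * dy;
}

// Local step: runs on each node against that node's chunk only.
PartialSums localStep(const std::vector<Point>& chunk,
                      const std::vector<Point>& centroids) {
    assert(!centroids.empty());
    PartialSums p{std::vector<Point>(centroids.size(), Point{0.0, 0.0}),
                  std::vector<std::size_t>(centroids.size(), 0)};
    for (const Point& pt : chunk) {
        // Find the nearest centroid for this point.
        std::size_t best = 0;
        for (std::size_t c = 1; c < centroids.size(); ++c) {
            if (squaredDistance(pt, centroids[c]) < squaredDistance(pt, centroids[best])) {
                best = c;
            }
        }
        p.sums[best][0] += pt[0];
        p.sums[best][1] += pt[1];
        p.counts[best] += 1;
    }
    return p;
}

// Master step: merge every node's partial sums and compute the new centroids.
std::vector<Point> masterStep(const std::vector<PartialSums>& parts,
                              const std::vector<Point>& old) {
    std::vector<Point> updated = old;  // a centroid with no points keeps its old position
    for (std::size_t c = 0; c < old.size(); ++c) {
        Point sum{0.0, 0.0};
        std::size_t n = 0;
        for (const PartialSums& p : parts) {
            sum[0] += p.sums[c][0];
            sum[1] += p.sums[c][1];
            n += p.counts[c];
        }
        if (n > 0) updated[c] = Point{sum[0] / n, sum[1] / n};
    }
    return updated;
}
```

Repeating the local and master steps until the centroids stop moving gives the full clustering; only the current centroids and the partial sums are exchanged between iterations.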