Difference between revisions of "Team Darth Vector"

m (→List of TBB Algorithms:) |

(→TBB Background) |

||

| Line 19: | Line 19: | ||

==TBB Background== | ==TBB Background== | ||

| − | + | <TODO> | |

==STL Background== | ==STL Background== | ||

Revision as of 19:23, 13 December 2017

TEAM, use this for formatting. Wiki Editing Cheat Sheet

Join me, and together we can fork the problem as master and thread

Members

Alistair Godwin

Giorgi Osadze

Leonel Jara

Contents

Generic Programming

Generic Programming is a an objective when writing code to make algorithms reusable and with the least amount of specific code. An example of generic code is STL's templating functions which provide generic code that can be used with many different types without requiring much specific coding for the type( an addition template could be used for int, double, float, short, etc without requiring re-coding). A non-generic library requires types to be specified, meaning more type-specific code has to be created.

TBB Background

<TODO>

STL Background

List of STL Functions:

Algorithms Are supported by STL for various algorithms such as sorting, searching and accumulation. All can be found within the header "<algorithm>". Examples include sort() and reverse functions()

STL iterators Are supported for serial traversal. Should you use an iterator in parallel, you must be cautious to not change the data while a thread is going through the iterator. They are defined within the header "<iterator>" and is coded as

#include<iterator>

foo(){

vector<type> myVector;

vector<type>::iterator i;

for( i = myVector.begin(); i < myVector.end(); i++){

bar();

}

}

Containers STL supports a variety of containers for data storage. Generally these containers are supported in parallel for read actions, but does not safely support writing to the container with or without reading at the same time. There are several header files that are included such as "<vector>", "<queue>", and "<deque>".

List of TBB containers:

concurrent_queue : This is the concurrent version of the STL container Queue. This container supports first-in-first-out data storage like its STL counterpart. Multiple threads may simultaneously push and pop elements from the queue. Queue does NOT support and front() or back() in concurrent operations(the front could change while accessing). Also supports iterations, but are slow and are only intended for debug. This is defined within the header "tbb/concurrent_queue.h" and is coded as:#include <tbb/concurrent_queue.h> //....// tbb:concurrent_queue<typename> name;concurrent_vector : This is a container class for vectors with concurrent(parallel) support. These vectors do not support insertion or erase operations but support operations done by multiple threads. Note that when elements are inserted, they cannot be removed without calling the clear() member function on it, which removes every element in the array. This is defined within the header "tbb/concurrent_vector.h" and is coded as:

#include <tbb/concurrent_vector.h> //...// tbb:concurrent_vector<typename> name;

concurrent_hash_map : A container class that supports hashing in parallel. The generated keys are not ordered and there will always be at least 1 element for a key. Defined within "tbb/concurrent_hash_map.h"

List of TBB Algorithms:

Algorithms

parallel_for: Provides concurrent support for for loops. This allows data to be divided up into chunks that each thread can work on. The code is defined in "tbb/parallel_for.h" and takes the template of:

foo parallel_for(firstPos, lastPos, increment { boo()}

parallel_scan: Provides concurrent support for a parallel scan. Intel promises it may invoke the function up to 2 times the amount when compared to the serial algorithm. The code is defined in "tbb/parallel_scan.h" and according to intel takes the template of:

void parallel_scan( const Range& range, Body& body [, partitioner] );

tbb:parallel_invoke(myFuncA, myFuncB, myFuncC);

Threads

Lock Convoying Problem

What is a Lock?

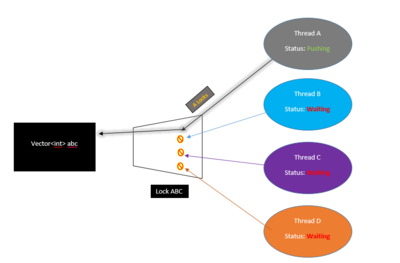

A Lock(also called "mutex") is a method for programmers to secure code that when executing in parallel can cause multiple threads to fight for a resource/container for some operation. When threads work in parallel to complete a task with containers, there is no indication when the thread reach the container and need to perform an operation on it. This causes problems when multiple threads are accessing the same place. When doing an insertion on a container with threads, we must ensure only 1 thread is capable of pushing to it or else threads may fight for control. By "Locking" the container, we ensure only 1 thread accesses at any given time.

To use a lock, you program must be working in parallel(ex #include <thread>) and should be completing something in parallel. You can find c++11 locks with #include <mutex>

Code example or Picture here ^_^

#include <iostream>

#include <thread>

#include <mutex>

//Some threads are spawned which call this function

//Declared the following within the class std::mutex NightsWatch;

void GameOfThronesClass::GuardTheWall(){

//Protect until Unlock() is called. Only 1 thread may do this below at a time. It is //"locked"

NightsWatch.Lock();

//Increment

DaysWithoutWhiteWalkerAttack++;

std::cout << "It has been " << DaysWithoutWhiteWalkerAttack << " since the last attack at Castle Black!\n";

//Allow Next thread to execute the above iteration

NightsWatch.Unlock();

}

Note that there can be problems with locks. If a thread is locked but it is never unlocked, any other threads will be forced to wait which may cause performance issues. Another problem is called "Dead Locking" where each thread may be waiting for another to unlock (and vice versa) and the program is forced to wait and wait .

Parallelism Problems & Convoying in STL

Within STL, issues arise when you attempt to access containers in parallel. With containers, when threads update the container say with push back, it is difficult to determine where the insertion occurred within the container(each thread is updating this container in any order) additionally, the size of the container is unknown as each thread may be updating the size as it goes (thread A may see a size of 4 while thread B a size of 9). For example, in a vector we can push some data to it in parallel but knowing where that data was pushed to requires us to iterate through the vector for the exact location . If we attempt to find the data in parallel with other operations ongoing, 1 thread could search for the data, but another could update the vector size during that time which causes problems with thread 1's search as the memory location may change should the vector need to grow(performs a deep copy to new memory).

Locks can solve the above issue but cause significant performance issues as the threads are forced to wait for each other. This performance hit is known as Lock Convoying.

Lock Convoying in TBB

TBB attempts to mitigate the performance issue from parallel code when accessing or completing an operation on a container through its own containers such as concurrent_vector. Through concurrent_vector, every time an element is accessed/changed, a return of the index location is given. TBB promises that any time an element is pushed, it will always be in the same location, no matter if the size of the vector changes in memory. With a standard vector, when the size of the vector changes, the data is copied over. If any threads are currently traversing this vector when the size changes, any iterators may no longer be valid.

Study Ref: https://software.intel.com/en-us/blogs/2008/10/20/tbb-containers-vs-stl-performance-in-the-multi-core-age This leads into Concurrent_vector growing below..

Efficiency Comparison Parallel for and concurrent_vector

If only you knew the power of the Building Blocks

Concept: Fine-grained locking

Multiple threads operate on the container by locking only those portions they really need to lock.

Concept: Lock-free algorithms

Bits of knowledge:

STL interfaces are inherently not thread-safe.

Threading Building Blocks containers are not templated with an allocator argument.

Links

http://www.cs.northwestern.edu/~riesbeck/programming/c++/stl-summary.html

http://www.cplusplus.com/reference/stl/

https://www.inf.ed.ac.uk/teaching/courses/ppls/TBBtutorial.pdf