Difference between revisions of "GPU621/Analyzing False Sharing"

Ryan Leong (talk | contribs) (→Example Of A False Sharing) |

Ryan Leong (talk | contribs) |

||

| Line 136: | Line 136: | ||

So the problem of false sharing sometimes cannot be generalized, and the occurrence of false sharing does not necessarily make performance lower. But no matter how the new block is the optimal solution. | So the problem of false sharing sometimes cannot be generalized, and the occurrence of false sharing does not necessarily make performance lower. But no matter how the new block is the optimal solution. | ||

| + | |||

| + | == example 2 == | ||

| + | |||

| + | If you think the above example is too limiting, not all cases can be abstracted to such a local variable. So let's let the two variables exist on different cache lines! | ||

| + | |||

| + | In this program code, we can easily see that the Number struct has two member variables (num1 and num2). They are for loop in two different threads to execute the increment operator 1000000 times. This time we use Linux with multiple cores to compile (note that here we are not using any optimization) | ||

Revision as of 13:35, 24 November 2022

Group Members

Example Of A False Sharing

#include <thread>

#include <vector>

#include <iostream>

#include <chrono>

using namespace std;

const int sizeOfNumbers = 10000; // Size of vector numbers

const int sizeOfSum = 2; // Size of vector sum

void sumUp(const vector<int> numbers, vector<long>& sum, int id) {

for (int i = 0; i < numbers.size(); i++) {

if (i % sizeOfSum == id) {

sum[id] += I;

}

}

// The output of this sum

cout << "sum " << id << " = " << sum[id] << endl;

}

int main() {

vector<int> numbers;

for (int i = 0; i < sizeOfNumbers; i++) { //Initialize vector numbers to 0 to 100

numbers.push_back(i);

}

cout << "-----Thread-----" << endl;

// Thread block

{

vector<long> sum(sizeOfSum, 0); //Set size=sizeOfSum and all to 0

auto start = chrono::steady_clock::now();

vector<thread> thread;

for (int i = 0; i < sizeOfSum; i++) {

thread.emplace_back(sumUp, numbers, ref(sum), i);

}

for (int i = 0; i < sizeOfSum; i++) {

thread[i].join();

}

auto end = chrono::steady_clock::now();

cout << "Thread time consumption: " << chrono::duration_cast<chrono::microseconds>(end - start).count() << "ms" << endl;

}

cout << endl << "-----Serial-----" << endl;

// Serial block

{

vector<long> sum(sizeOfSum, 0);

auto start = chrono::steady_clock::now();

for (int i = 0; i < sizeOfSum; i++) {

sumUp(numbers, sum, i);

}

auto end = chrono::steady_clock::now();

cout << "Serial time consumption: " << chrono::duration_cast<chrono::microseconds>(end - start).count() << "ms" << endl;

}

}

In this program code, we can see that at the beginning of the main function we declare a vector numbers and initialize it from 0 to 100. We can also see in the main function that the code is divided into two main blocks. The first block is the Thread block, which is executed concurrently using multiple threads. While the second block is the Serial block, it is executed concurrently using normal serial logic.

The main purpose of the sumUp function is to calculate the sum of odd elements or even elements based on the data in the first vector argument in the argument list. Also, the sum will be recorded in the corresponding position of the second vector argument using the int argument as the index.

Which block of code do you think will take less time?

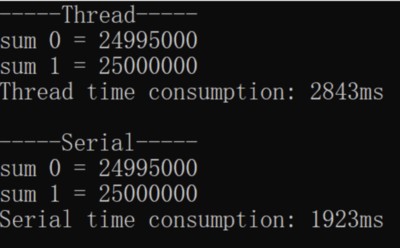

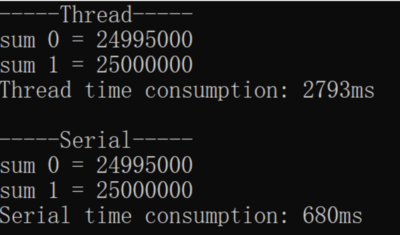

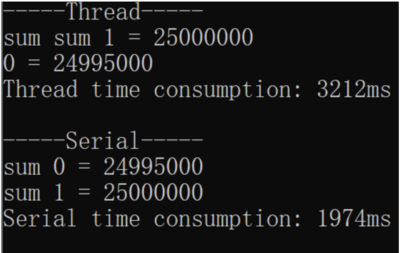

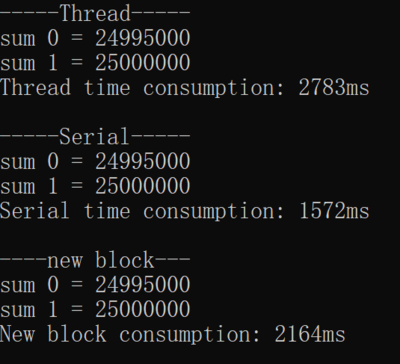

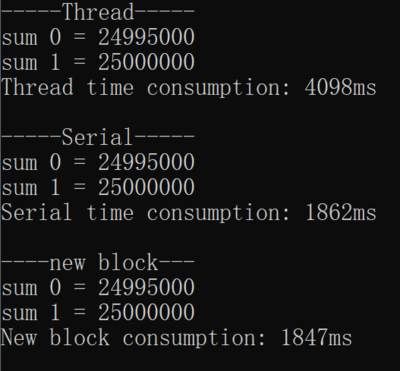

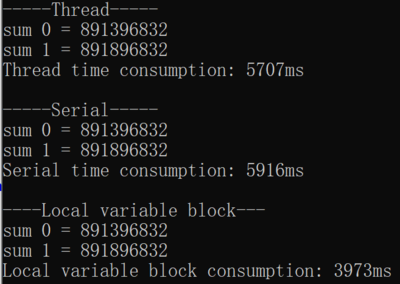

Theoretically, this code should be executed faster on a multicore machine with a Thread block than a serial block. But the result is.

Or

Or this

To our surprise, the serial block took much less time, no matter how many times I ran it. This turned our existing knowledge upside down, but don't worry, it's because you don't understand False Sharing yet.

As we said above, the smallest unit of CPU operation on the cache is the size of a cache line, which is 64 bytes. As you can see in our program code, the sum is a vector that stores two long data types. The two long data are in fact located on the same cache line. When two threads read and write sum[0] and sum[1] separately, it looks like they are not using the same variable, but in fact, they are affecting each other.For example, if a thread updates sum[0] in CPU0, this causes the cache line of sum[1] in CPU1 to be invalidated. This causes the program to take longer to run, as it needs to re-read the data.

We try to change the sizeOfSum to 8, which means that changing the size of the vector sum to 8 and the if condition in the sumUp function to i%8==id will give the program more data to process. Nevertheless, the result still does not bring the time between the Serial block and Thread block closer and the Serial block still takes less time.

What would it take to show the advantages of multicore? As you can see in our program, multiple threads are not operating on the same variable, but only a single thread is reading and writing a lot of variables in the same cache line, so you can introduce a local variable.

We implement another function newSumUp in the program code:

void newSumUP(const vector<int> numbers, vector<long>& sum, int id) {

long thisSum = 0;

for (int i = 0; i < numbers.size(); ++i) {

if (i % sizeOfSum == id) {

thisSum += i;

}

}

sum[id] = thisSum;

cout << "sum " << id << " = " << sum[id] << endl;

}

Also, we add the following code to our main function:

cout << endl << "----new block---" << endl;

// new block

{

vector<long> sum(sizeOfSum, 0);

auto start = chrono::steady_clock::now();

vector<thread> thread;

for (int i = 0; i < sizeOfSum; ++i) {

thread.emplace_back(newSumUP, numbers, ref(sum), i);

}

for (int i = 0; i < sizeOfSum; ++i) {

thread[i].join();

}

auto end = chrono::steady_clock::now();

cout << "New block consumption: " << chrono::duration_cast<chrono::microseconds>(end - start).count() << "ms" << endl;

}

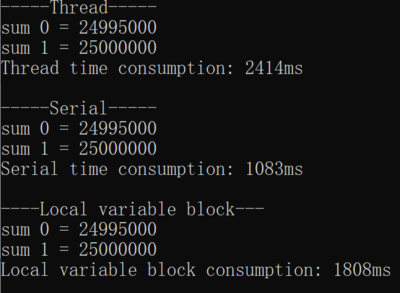

When we ran the program again, we came to this conclusion:

We can see that the new block is already much faster than the Thread block, and even comparable to the Serial block is almost the same. But overall the Serial block is still the least time-consuming because the new block still needs to incur extra overhead for thread creation and scheduling.

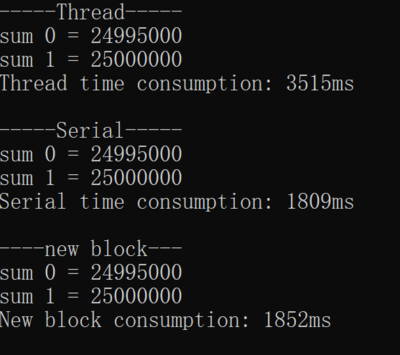

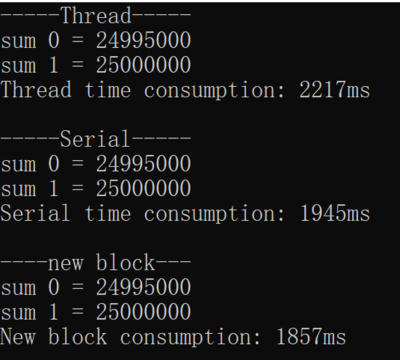

We could try increasing sizeOfNumbers to 1000000 as well, which would allow the program to process more data, thus compensating for the extra overhead of thread creation and scheduling.

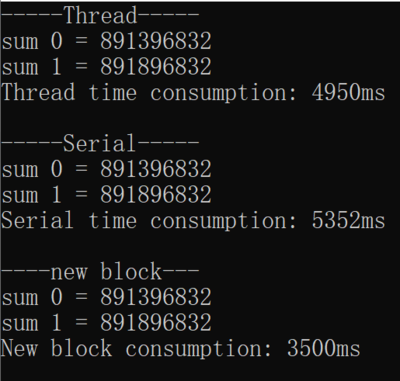

Now we can already see the advantage of multi-threading. Even when the vector numbers reach the size of 1000000, the Thread block even runs faster than the Serial block.

So the problem of false sharing sometimes cannot be generalized, and the occurrence of false sharing does not necessarily make performance lower. But no matter how the new block is the optimal solution.

example 2

If you think the above example is too limiting, not all cases can be abstracted to such a local variable. So let's let the two variables exist on different cache lines!

In this program code, we can easily see that the Number struct has two member variables (num1 and num2). They are for loop in two different threads to execute the increment operator 1000000 times. This time we use Linux with multiple cores to compile (note that here we are not using any optimization)