Difference between revisions of "GPU621/Analyzing False Sharing"

Ryan Leong

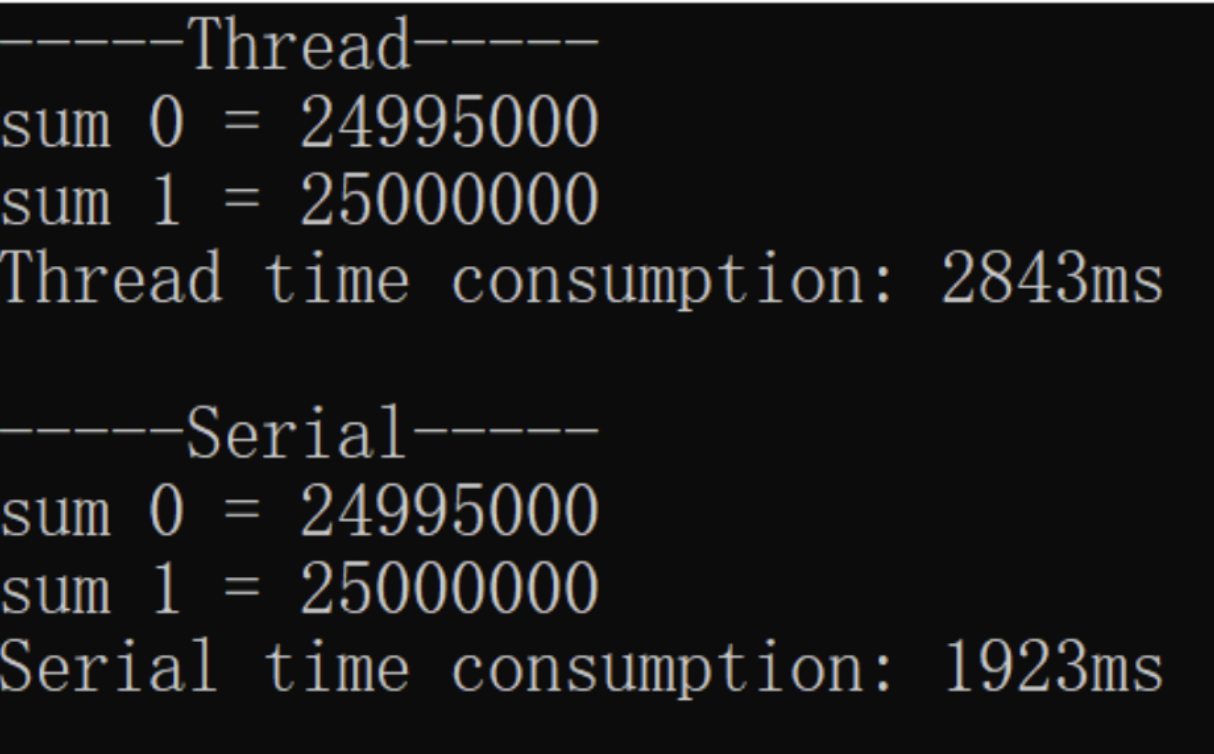

Theoretically, this code should be executed faster on a multicore machine with a Thread block than a serial block. But the result is. | Theoretically, this code should be executed faster on a multicore machine with a Thread block than a serial block. But the result is. | ||

| + | [[File:exampleOutput1.jpg|left]] | ||

Or this | Or this | ||

| + | |||

To our surprise, the serial block took much less time, no matter how many times I ran it. This turned our existing knowledge upside down, but don't worry, it's because you don't understand False Sharing yet. | To our surprise, the serial block took much less time, no matter how many times I ran it. This turned our existing knowledge upside down, but don't worry, it's because you don't understand False Sharing yet. | ||

As we said above, the smallest unit of CPU operation on the cache is the size of a cache line, which is 64 bytes. As you can see in our program code, the sum is a vector that stores two long data types. The two long data are in fact located on the same cache line. When two threads read and write sum[0] and sum[1] separately, it looks like they are not using the same variable, but in fact, they are affecting each other.For example, if a thread updates sum[0] in CPU0, this causes the cache line of sum[1] in CPU1 to be invalidated. This causes the program to take longer to run, as it needs to re-read the data. | As we said above, the smallest unit of CPU operation on the cache is the size of a cache line, which is 64 bytes. As you can see in our program code, the sum is a vector that stores two long data types. The two long data are in fact located on the same cache line. When two threads read and write sum[0] and sum[1] separately, it looks like they are not using the same variable, but in fact, they are affecting each other.For example, if a thread updates sum[0] in CPU0, this causes the cache line of sum[1] in CPU1 to be invalidated. This causes the program to take longer to run, as it needs to re-read the data. | ||

Revision as of 13:14, 23 November 2022

Group Members

Example Of A False Sharing

#include <thread>

#include <vector>

#include <iostream>

#include <chrono>

#include <functional> // for ref

using namespace std;

const int sizeOfNumbers = 10000; // Size of vector numbers

const int sizeOfSum = 2; // Size of vector sum

void sumUp(const vector<int> numbers, vector<long>& sum, int id) {

for (int i = 0; i < numbers.size(); i++) {

if (i % sizeOfSum == id) {

sum[id] += i; // numbers[i] == i here, so this adds the selected element

}

}

// The output of this sum

cout << "sum " << id << " = " << sum[id] << endl;

}

int main() {

vector<int> numbers;

for (int i = 0; i < sizeOfNumbers; i++) { // Fill vector numbers with 0 to sizeOfNumbers - 1

numbers.push_back(i);

}

cout << "-----Thread-----" << endl;

{ // Thread

vector<long> sum(sizeOfSum, 0); //Set size=sizeOfSum and all to 0

auto start = chrono::steady_clock::now();

vector<thread> td;

for (int i = 0; i < sizeOfSum; i++) {

td.emplace_back(sumUp, numbers, ref(sum), i);

}

for (int i = 0; i < sizeOfSum; i++) {

td[i].join();

}

auto end = chrono::steady_clock::now();

cout << "Thread time consumption: " << chrono::duration_cast<chrono::microseconds>(end - start).count() << "us" << endl;

}

cout << endl << "-----Serial-----" << endl;

{ // Serial

vector<long> sum(sizeOfSum, 0);

auto start = chrono::steady_clock::now();

for (int i = 0; i < sizeOfSum; i++) {

sumUp(numbers, sum, i);

}

auto end = chrono::steady_clock::now();

cout << "Serial time consumption: " << chrono::duration_cast<chrono::microseconds>(end - start).count() << "us" << endl;

}

}

In this program, the main function first declares a vector numbers and fills it with the values 0 to 9999 (sizeOfNumbers is 10000). The rest of the main function is divided into two blocks. The first is the Thread block, which runs the work concurrently on multiple threads. The second is the Serial block, which runs the same work sequentially on a single thread.

The sumUp function calculates the sum of either the even-indexed or the odd-indexed elements of the vector passed as its first argument. The int argument id selects which of the two (0 for even indices, 1 for odd indices) and is also the index in the second vector argument where the sum is recorded.

Which block of code do you think will take less time?

In theory, the Thread block should run faster than the Serial block on a multicore machine. But the result is:

[[File:exampleOutput1.jpg|left]]

Or this:

To our surprise, the Serial block took much less time, no matter how many times we ran it. This seems to turn what we know about multithreading upside down, but don't worry: the result makes sense once you understand False Sharing.

As we said above, the CPU moves data in and out of the cache in units of one cache line, which is typically 64 bytes. In our program, sum is a vector that stores two long values, and the two values sit on the same cache line. When two threads read and write sum[0] and sum[1] separately, it looks like they are not using the same variable, but in fact they affect each other. For example, if a thread on CPU0 updates sum[0], the copy of that cache line held by CPU1 is invalidated, even though CPU1 only uses sum[1]. CPU1 must then re-read the line before it can continue, which makes the program take longer to run.