Difference between revisions of "Alpha Centauri"

Latest revision as of 12:50, 22 December 2017

"The world is one big data problem." -cit. Andrew McAfee


Intel Data Analytics Acceleration Library

Team Members

Introduction

Intel Data Analytics Acceleration Library, also known as Intel DAAL, is a library created by Intel in 2015 to solve problems associated with Big Data and Machine Learning.

It is available for Linux, OS X, and Windows, and it supports C++, Python, and Java.

It is optimized to run on a wide range of devices, from home computers to data centers, and it uses vectorization to improve performance.

Intel DAAL helps speed up big data analytics by providing highly optimized algorithmic building blocks for all data analysis stages and by supporting different processing modes.

The data analysis stages covered are:

- Pre-processing

- Transformation

- Analysis

- Modeling

- Validation

- Decision Making

The different processing modes are:

- Batch processing - Data is stored in memory and processed all at once.

- Online processing - Data is processed in chunks and then the partial results are combined during the finalizing stage. This is also called Streaming.

- Distributed processing - Similarly to MapReduce, Consumers in a cluster process local data (map stage), and then the Producer process collects and combines the partial results from the Consumers (reduce stage). Developers can either rely on the data movement of a framework such as Hadoop or Spark, or explicitly code the communication using MPI.
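The three modes differ mainly in when partial results are produced and combined. The following is a minimal sketch in plain C++ that does not use the Intel DAAL API; it computes a mean in each mode. The names PartialMean, updatePartial, mergePartials, finalizeMean, and batchMean are hypothetical and exist only for this illustration.

```cpp
#include <cstddef>
#include <vector>

// Partial result for a mean: the sum and the number of values seen so far.
struct PartialMean {
    double sum = 0.0;
    std::size_t count = 0;
};

// Online step: fold one chunk of data into an existing partial result.
PartialMean updatePartial(PartialMean partial, const std::vector<double>& chunk) {
    for (double x : chunk) {
        partial.sum += x;
        ++partial.count;
    }
    return partial;
}

// Distributed "reduce" step: combine the partial results of several workers.
PartialMean mergePartials(const std::vector<PartialMean>& partials) {
    PartialMean total;
    for (const PartialMean& p : partials) {
        total.sum += p.sum;
        total.count += p.count;
    }
    return total;
}

// Finalizing stage: turn a partial result into the final mean.
double finalizeMean(const PartialMean& partial) {
    return partial.count == 0 ? 0.0
                              : partial.sum / static_cast<double>(partial.count);
}

// Batch mode: all data is in memory, so a single update is followed by finalize.
double batchMean(const std::vector<double>& data) {
    return finalizeMean(updatePartial(PartialMean{}, data));
}
```

In online mode, updatePartial would be called once per chunk and finalizeMean once at the end. In distributed mode, each worker would call updatePartial on its local data and a single process would call mergePartials and then finalizeMean.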

How Intel DAAL Works

Intel DAAL comes pre-bundled with Intel® Parallel Studio XE and Intel® System Studio. It is also available as a stand-alone version and can be installed by following these instructions: https://software.intel.com/en-us/get-started-with-daal-for-linux

Intel DAAL is a simple and efficient way to solve problems related to Big Data, Machine Learning, and Deep Learning.

It handles the complex and tedious algorithms, so software developers only have to feed in the data and follow the Data Analytics Ecosystem Flow.

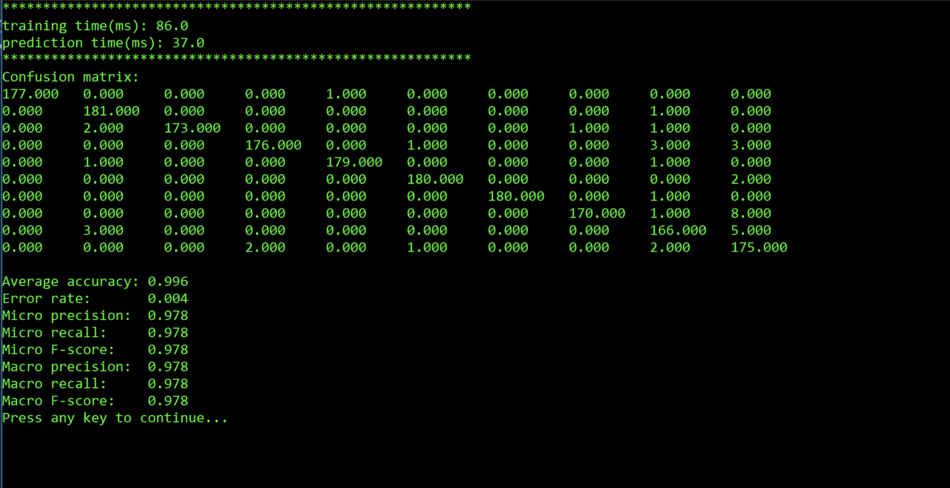

The following pictures show how Intel DAAL works in greater detail.

This picture shows the Data Flow in Intel DAAL.

The picture shows the data being fed to the program and all the steps that Intel DAAL goes through when processing the data.

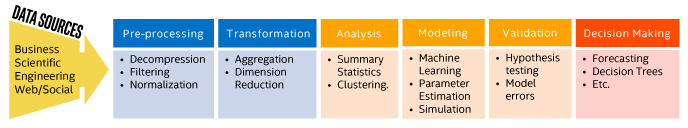

This picture shows the Intel DAAL Model (Data Management, Algorithms, and Services).

This model represents all the functionalities that Intel DAAL offers, from acquiring the data to making a final decision.

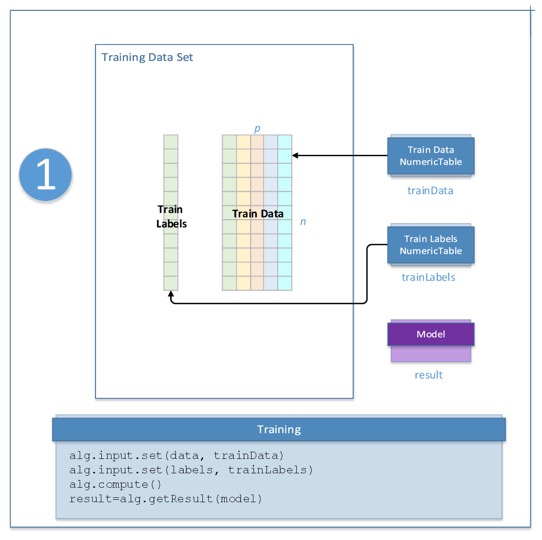

This picture shows how Intel DAAL processes the data.

Intel DAAL in handwritten digit recognition

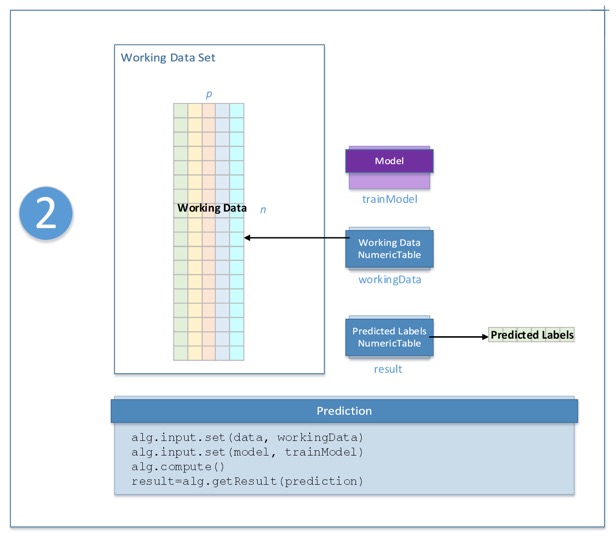

Handwritten digit recognition is a classic machine learning problem. Intel DAAL is well suited to it because it provides several relevant algorithms, such as Support Vector Machine (SVM), Principal Component Analysis (PCA), Naïve Bayes, and Neural Networks. The example below uses SVM to solve this problem.

Recognition is essentially the prediction step of the machine learning pipeline. Given a handwritten digit, the system should be able to determine which digit was written. To predict an output for new data, the system needs a model trained on a training data set.

The first step before training a model is to load the training data from the .csv data set files.
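As a rough illustration of what reading one row of such a file involves, the sketch below parses a comma-separated line into numeric features using only the C++ standard library. It assumes each row holds plain numeric values separated by commas; the function name parseCsvRow is hypothetical and is not part of Intel DAAL, which performs this work internally through its data source classes.

```cpp
#include <sstream>
#include <string>
#include <vector>

// Split one comma-separated line into numeric feature values.
// Assumes every cell is a valid number; std::stod throws
// std::invalid_argument for a cell that is empty or not numeric.
std::vector<double> parseCsvRow(const std::string& line) {
    std::vector<double> values;
    std::stringstream row(line);
    std::string cell;
    while (std::getline(row, cell, ',')) {
        values.push_back(std::stod(cell));
    }
    return values;
}
```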

Loading Data in Intel DAAL

Setting Training and Testing Files:

string trainDatasetFileName = "digits_tra.csv";
string trainGroundTruthFileName = "digits_tra_labels.csv";
string testDatasetFileName = "digits_tes.csv";
string testGroundTruthFileName = "digits_tes_labels.csv";

Setting Training and Prediction Models

services::SharedPtr<svm::training::Batch<> > training(new svm::training::Batch<>());
services::SharedPtr<svm::prediction::Batch<> > prediction(new svm::prediction::Batch<>());

Setting Training and Prediction Algorithm Models

services::SharedPtr<multi_class_classifier::training::Result> trainingResult;
services::SharedPtr<classifier::prediction::Result> predictionResult;

Setting up Kernel Function Parameters for Multi-Class Classifier

kernel_function::rbf::Batch<> *rbfKernel = new kernel_function::rbf::Batch<>();
services::SharedPtr<kernel_function::KernelIface> kernel(rbfKernel);
services::SharedPtr<multi_class_classifier::quality_metric_set::ResultCollection> qualityMetricSetResult;

Initializing Numeric Tables for Predicted and Ground Truth

services::SharedPtr<NumericTable> predictedLabels;
services::SharedPtr<NumericTable> groundTruthLabels;
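The radial basis function (RBF) kernel selected above measures how similar two samples are. The sketch below evaluates the common Gaussian form K(x, y) = exp(-||x - y||^2 / (2 * sigma^2)) in plain C++. It is an illustration only: the function name rbfKernelValue is hypothetical, and the assumption that the library's sigma parameter enters the formula in exactly this way should be checked against the Intel DAAL documentation.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Gaussian RBF kernel: exp(-||x - y||^2 / (2 * sigma^2)).
// Only the components present in both vectors are compared.
double rbfKernelValue(const std::vector<double>& x,
                      const std::vector<double>& y,
                      double sigma) {
    double squaredDistance = 0.0;
    for (std::size_t i = 0; i < x.size() && i < y.size(); ++i) {
        const double d = x[i] - y[i];
        squaredDistance += d * d;
    }
    return std::exp(-squaredDistance / (2.0 * sigma * sigma));
}
```

The value is 1 for identical samples and decays towards 0 as the samples move apart; a larger sigma makes the decay slower.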

Training Data in Intel DAAL

Initialize FileDataSource<CSVFeatureManager> to retrieve input data from .csv file

FileDataSource<CSVFeatureManager> trainDataSource(trainDatasetFileName,
    DataSource::doAllocateNumericTable,
    DataSource::doDictionaryFromContext);
FileDataSource<CSVFeatureManager> trainGroundTruthSource(trainGroundTruthFileName,
    DataSource::doAllocateNumericTable,
    DataSource::doDictionaryFromContext);

Load data from files

// nTrainObservations (the number of rows to read) must be defined elsewhere.
trainDataSource.loadDataBlock(nTrainObservations);
trainGroundTruthSource.loadDataBlock(nTrainObservations);

Initialize algorithm object for SVM training

multi_class_classifier::training::Batch<> algorithm;

Setting algorithm parameters

// nClasses is the number of digit classes and must be defined elsewhere.
algorithm.parameter.nClasses = nClasses;
algorithm.parameter.training = training;
algorithm.parameter.prediction = prediction;

Pass dependent parameters and training data to the algorithm

// Use the RBF kernel created earlier for both training and prediction.
training->parameter.kernel = kernel;
prediction->parameter.kernel = kernel;
algorithm.input.set(classifier::training::data, trainDataSource.getNumericTable());
algorithm.input.set(classifier::training::labels, trainGroundTruthSource.getNumericTable());

Build the model

// The result is only available after compute() has run.
algorithm.compute();

Retrieving results from algorithm

trainingResult = algorithm.getResult();

Serialize the learned model into a disk file. The model stored in trainingResult is written to the file ./model.

ModelFileWriter writer("./model");
writer.serializeToFile(trainingResult->get(classifier::training::model));
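An SVM separates two classes, so a multi-class classifier has to combine several two-class decisions. One common scheme is one-against-one voting, in which every pair of classes gets its own binary classifier and the class with the most wins is chosen. The sketch below shows that voting step in plain C++, assuming this scheme; it is not the Intel DAAL implementation. PairwiseClassifier, predictOneAgainstOne, and the toy rule nearestOfTwo (a stand-in for a trained binary SVM) are all hypothetical names.

```cpp
#include <cmath>
#include <cstddef>
#include <functional>
#include <vector>

// A binary decision between classes a and b for one sample; must return a or b.
using PairwiseClassifier =
    std::function<int(int a, int b, const std::vector<double>& sample)>;

// Run every pairwise classifier and return the class with the most votes.
// Ties are resolved in favour of the lowest class index.
int predictOneAgainstOne(int nClasses, const std::vector<double>& sample,
                         const PairwiseClassifier& decide) {
    std::vector<int> votes(static_cast<std::size_t>(nClasses), 0);
    for (int a = 0; a < nClasses; ++a) {
        for (int b = a + 1; b < nClasses; ++b) {
            const int winner = decide(a, b, sample);
            ++votes[static_cast<std::size_t>(winner)];
        }
    }
    int best = 0;
    for (int c = 1; c < nClasses; ++c) {
        if (votes[static_cast<std::size_t>(c)] > votes[static_cast<std::size_t>(best)]) {
            best = c;
        }
    }
    return best;
}

// Toy stand-in for a trained binary classifier: picks whichever class index
// is numerically closer to the first feature of a non-empty sample.
int nearestOfTwo(int a, int b, const std::vector<double>& sample) {
    return std::fabs(sample[0] - a) <= std::fabs(sample[0] - b) ? a : b;
}
```

With nClasses classes this scheme evaluates nClasses * (nClasses - 1) / 2 binary classifiers per sample, which is 45 for the ten digits.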

Testing The Trained Model

Initialize testDataSource to retrieve test data from a .csv file

FileDataSource<CSVFeatureManager> testDataSource(testDatasetFileName,
    DataSource::doAllocateNumericTable,
    DataSource::doDictionaryFromContext);
// nTestObservations (the number of rows to read) must be defined elsewhere.
testDataSource.loadDataBlock(nTestObservations);

Initialize algorithm object for prediction of SVM values

multi_class_classifier::prediction::Batch<> algorithm;

Setting algorithm parameters

algorithm.parameter.nClasses = nClasses;
algorithm.parameter.training = training;
algorithm.parameter.prediction = prediction;

Pass into the algorithm the testing data and trained model

algorithm.input.set(classifier::prediction::data, testDataSource.getNumericTable());
algorithm.input.set(classifier::prediction::model, trainingResult->get(classifier::training::model));

Run the prediction

// The result is only available after compute() has run.
algorithm.compute();

Retrieve results from algorithm

predictionResult = algorithm.getResult();

Testing the Quality of the Model

Initialize testGroundTruth to retrieve ground truth test data from .csv file

FileDataSource<CSVFeatureManager> testGroundTruth(testGroundTruthFileName,
    DataSource::doAllocateNumericTable,
    DataSource::doDictionaryFromContext);
testGroundTruth.loadDataBlock(nTestObservations);

Retrieve label for ground truth

groundTruthLabels = testGroundTruth.getNumericTable();

Retrieve prediction label

predictedLabels = predictionResult->get(classifier::prediction::prediction);

Create quality metric object to quantify the quality of the classifier algorithm

multi_class_classifier::quality_metric_set::Batch qualityMetricSet(nClasses);
services::SharedPtr<multiclass_confusion_matrix::Input> input =
    qualityMetricSet.getInputDataCollection()->getInput(multi_class_classifier::quality_metric_set::confusionMatrix);
input->set(multiclass_confusion_matrix::predictedLabels, predictedLabels);
input->set(multiclass_confusion_matrix::groundTruthLabels, groundTruthLabels);

Compute quality

qualityMetricSet.compute();

Retrieve quality results

qualityMetricSetResult = qualityMetricSet.getResultCollection();
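The quality metric set above is built around a multi-class confusion matrix. To show what such a matrix contains, the sketch below builds one from two label vectors in plain C++ and derives the overall accuracy from it. It is independent of the Intel DAAL API: the names Matrix, buildConfusionMatrix, and overallAccuracy are hypothetical, and the labels are assumed to be integers in the range 0 to nClasses - 1.

```cpp
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<std::size_t>>;

// Entry [t][p] counts the samples whose true class is t and predicted class is p.
// Assumes every label lies in [0, nClasses); extra entries in the longer
// of the two label vectors are ignored.
Matrix buildConfusionMatrix(const std::vector<int>& groundTruth,
                            const std::vector<int>& predicted,
                            std::size_t nClasses) {
    Matrix m(nClasses, std::vector<std::size_t>(nClasses, 0));
    const std::size_t n = groundTruth.size() < predicted.size()
                              ? groundTruth.size()
                              : predicted.size();
    for (std::size_t i = 0; i < n; ++i) {
        const std::size_t t = static_cast<std::size_t>(groundTruth[i]);
        const std::size_t p = static_cast<std::size_t>(predicted[i]);
        ++m[t][p];
    }
    return m;
}

// Accuracy is the share of samples on the diagonal (true class == predicted class).
double overallAccuracy(const Matrix& m) {
    std::size_t correct = 0;
    std::size_t total = 0;
    for (std::size_t t = 0; t < m.size(); ++t) {
        for (std::size_t p = 0; p < m[t].size(); ++p) {
            total += m[t][p];
            if (t == p) {
                correct += m[t][p];
            }
        }
    }
    return total == 0 ? 0.0
                      : static_cast<double>(correct) / static_cast<double>(total);
}
```

Off-diagonal entries show which digits are confused with each other, which is often more informative than the accuracy alone.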