
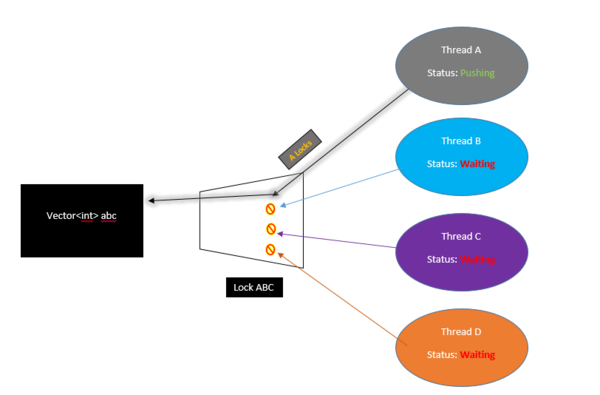


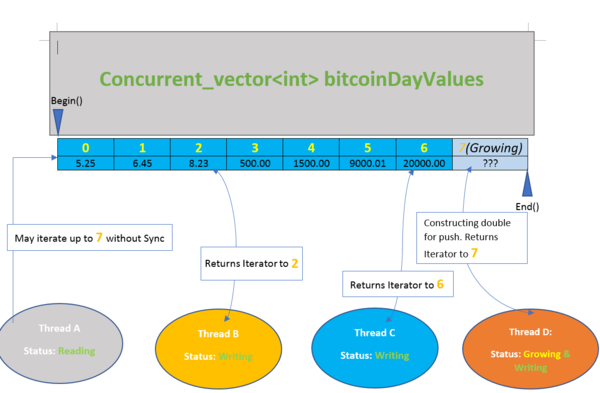


TBB also provides its own versions of the mutex, such as ''spin_mutex'', for when mutual exclusion is still required.

You can find more information on convoying and containers here: https://software.intel.com/en-us/blogs/2008/10/20/tbb-containers-vs-stl-performance-in-the-multi-core-age

===Why was TBB slower for Int?===

When dealing with primitive types like int, the push back operation itself is not very complex, so a serial process can complete it quite quickly. The vector can also likely hold a lot more data before it needs to reallocate its memory. TBB becomes practical when performing a more complex action on a primitive type or a simple action on a more complex type. Parallel overhead (the resources required to support TBB in parallel) may also increase the time required, which could be the cause of the slowdown in the above comparison. TBB also provides automatic chunking, and Intel suggests that a loop typically needs to take at least about a million clock cycles for parallel_for to improve its performance.

==Business Point of View Comparison for STL and TBB==

{| class="wikitable collapsible collapsed" style="text-align: left;margin:0px;"

===Which library is better depending on the use case?===

The real question is whether you should parallelize your code or keep it serial.

TBB is for multi-threaded workloads and STL is for single-threaded workloads. The fastest known serial algorithm may be difficult or impossible to parallelize.

Some aspects to look out for when parallelizing your code are:

*Overhead, whether from communication, idling, load imbalance, synchronization, or excess computation
*Efficiency, which is the measure of processor utilization in a parallel program
*Scalability: the efficiency can be kept constant as the number of processing elements is increased, provided that the problem size is also increased
*Correct problem size: testing may show poor efficiency if the problem size is too small, so if the problem size is always small you would want to use serial code instead. If the problem size is large and efficiency is good, then parallel is the way to go

Resource: http://ppomorsk.sharcnet.ca/Lecture_2_d_performance.pdf

=== Identifying the worries and responsibilities ===

Increasing code complexity is a natural problem when working in parallel. Knowing your responsibilities as a developer, that is, what you must worry about, is key when deciding whether to quickly implement parallel regions in your code or to keep your code serial.

====STL and the Threading Libraries====

If you are going to parallelize your code using STL coupled with the threading libraries, this is what you must worry about:

*Thread creation, termination, synchronization, partitioning, and management must be handled by you. This increases the workload and the complexity. Thread creation and overall resource management for STL are handled by a combination of libraries.
*Dividing a collection of data is more of a problem when using the STL containers.
*C++11 does not have any parallel algorithms, so common parallel patterns such as map, scan, and reduce must be implemented by yourself or provided by another library. C++17, however, will have some parallel algorithms such as scan, map, and reduce.

'''What you don’t need to worry about'''

*Writing sorting and searching algorithms
*Partitioning data
*Array algorithms, like copying, assigning, and checking data

| Line 316: | Line 319: | ||

Note that all of these algorithms run in serial and may not be thread safe.

====TBB Worries and Responsibilities====

*Thread creation, termination, synchronization, partitioning, and management are handled by TBB.
*TBB has its own parallel algorithms, so you need not worry about the heavy thread constructs present at the lower levels of programming. For a simple map, scan, pipeline, or reduce, TBB has you covered.
*Dividing a collection of data: the blocked range, coupled with TBB's algorithms, makes it simpler to divide the data.

'''Benefit''' | '''Benefit''' | ||

| Line 330: | Line 333: | ||

The downside of TBB is that, since much of the close-to-hardware management is done behind the scenes, you as a developer have less control over fine-tuning your program, unlike with STL and the threading library.

===Licensing===

TBB is dual-licensed as of September 2016:

*COM license as part of suite products. Offers one year of technical support and product updates
*Apache v2.0 license for open source code. Allows users the freedom to use the software for any purpose, to distribute it, to modify it, and to distribute modified versions of it, under the terms of the license, without concern for royalties.

===Companies and Products that use TBB===

*DreamWorks (DreamWorks Fur Shader)
*Blue Sky Studios (animation and simulation software)
*Pacific Northwest National Laboratory (Ultrasound products)
*More: https://software.intel.com/en-us/intel-tbb/reviews

Latest revision as of 17:09, 17 December 2017

GPU621 Darth Vector: C++11 STL vs TBB Case Studies

Join me, and together we can fork the problem as master and thread

Members

Alistair Godwin

Giorgi Osadze

Leonel Jara

Contents

- 1 Generic Programming

- 2 TBB Background

- 3 STL Background

- 4 A Comparison from STL

- 5 A Comparison from TBB

- 6 TBB Memory Allocation & Fixing Issues from Parallel Programming

- 7 Lock Convoying Problem

- 8 A Comparison between Serial Vector and TBB concurrent_vector

- 9 Business Point of View Comparison for STL and TBB

Generic Programming

Generic programming is an approach to writing code that aims to make algorithms reusable while keeping type-specific code to a minimum. Intel describes generic programming as "writing the best possible algorithms with the least constraints". An example is the STL's templates, which provide code that works with many different types without being rewritten for each one (a single addition template could be used for int, double, float, short, etc.). A non-generic library requires types to be specified, meaning more type-specific code has to be created.
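The addition template mentioned above could look like the following minimal sketch. The names add and addWorksForSeveralTypes are made up for illustration and are not taken from the STL.

```cpp
#include <cassert>
#include <string>

// One generic definition serves every type that supports operator+.
template <typename T>
T add(const T& a, const T& b) {
    return a + b;
}

// Hypothetical helper that exercises the same template with several types.
inline bool addWorksForSeveralTypes() {
    assert(add(2, 3) == 5);                       // int
    return add(1.5, 2.25) == 3.75                 // double
        && add(std::string("ab"), std::string("cd")) == "abcd";
}
```

Without the template, a separate add function would have to be written for each of int, double and std::string.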

TBB Background

Threading Building Blocks (TBB) is an attempt by Intel to push the development of multi-threaded programs. The library implements containers and algorithms that make it easier for the programmer to create multi-threaded applications, including parallel versions of the for, reduce and scan patterns. TBB was originally developed by Intel, but the concepts it uses are derived from a diverse range of sources. TBB was created in 2004 and was open sourced in 2007; the latest release was in 2017.

STL Background

The STL was created as a general-purpose computation library with a focus on generic programming. The STL uses templates extensively to achieve compile-time polymorphism. In general, the library provides four components: algorithms, containers, functions and iterators.

The library was mostly created by Alexander Stepanov, driven by his ideas about generic programming and its potential to revolutionize software development. Stepanov chose C++ because it provides access to storage through pointers, even though the language was still relatively young at the time.

After a long period of engineering and development of the library, it obtained final approval in July 1994 to become part of the language standard.

A Comparison from STL

In general, most of STL is intended for use within a serial environment. This however changes with C++17's introduction of parallel algorithms.

Algorithms

The STL provides algorithms for tasks such as sorting, searching and accumulation. Most are found in the header "<algorithm>" (std::accumulate() is in "<numeric>"). Examples include the sort() and reverse() functions.

STL iterators

Iterators are supported for serial traversal. If you use an iterator in parallel, you must be careful not to change the data while a thread is traversing it. They are defined within the header "<iterator>" and are coded as:

#include <iterator>
#include <vector>

using type = int;  // placeholder element type

void bar();  // work done for each element

void foo() {
    std::vector<type> myVector;
    std::vector<type>::iterator i;
    // Compare with != so the loop works for any iterator category
    for (i = myVector.begin(); i != myVector.end(); ++i) {
        bar();
    }
}

Containers

STL supports a variety of containers for data storage. Generally these containers can safely be read by several threads at once, but they do not safely support writing to the container, with or without reading at the same time. Containers are provided by several header files, such as "<vector>", "<queue>", and "<deque>". Most STL containers do not support concurrent operations upon them. They are coded as:

#include <vector>
#include <deque>
#include <queue>

using type = int;  // placeholder element type

int main() {
    std::vector<type> myVector;
    std::deque<type> cards;
    std::queue<type> SenecaYorkTimHortons;
}

Memory Allocator

A memory allocator gives more precise control over how dynamic memory is created and used. std::allocator is the default allocator for all STL containers: the container obtains and releases its memory through the allocator instead of calling 'new' and 'delete' itself, which lets the container's memory scale as it grows. Allocators are defined within the header file "<memory>" and are coded as:

#include <memory>

using type = int;  // placeholder element type

void foo() {
    std::allocator<type> name;
}
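To show what a container does with its allocator behind the scenes, here is a small sketch that drives std::allocator by hand through std::allocator_traits. The function name allocatorDemo is made up for illustration. Real containers do the same steps internally, so this is rarely written in application code.

```cpp
#include <cassert>
#include <memory>
#include <vector>

// Sketch: allocate, construct, destroy and deallocate three ints by hand.
inline int allocatorDemo() {
    std::allocator<int> alloc;
    using Traits = std::allocator_traits<std::allocator<int>>;

    int* p = Traits::allocate(alloc, 3);         // raw storage for 3 ints
    for (int i = 0; i < 3; ++i)
        Traits::construct(alloc, p + i, i + 1);  // construct 1, 2, 3 in place

    int sum = p[0] + p[1] + p[2];

    for (int i = 0; i < 3; ++i)
        Traits::destroy(alloc, p + i);
    Traits::deallocate(alloc, p, 3);

    // Containers take the allocator as their second template argument.
    std::vector<int, std::allocator<int>> v{4, 5};
    assert(v.size() == 2);
    return sum + v[0] + v[1];
}
```

Because the allocator is a template argument, swapping in a different allocator type changes how the container gets its memory without touching the rest of the code.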

A Comparison from TBB

Containers

concurrent_queue: This is the concurrent version of the STL container queue. It supports first-in-first-out data storage like its STL counterpart, and multiple threads may simultaneously push and pop elements. It does NOT support front() or back(), because in concurrent operation the front could change while it is being accessed. It also supports iteration, but this is slow and intended only for debugging. It is defined within the header "tbb/concurrent_queue.h" and is coded as:

#include <tbb/concurrent_queue.h>
//....//
tbb::concurrent_queue<typename> name;

concurrent_vector: This is a container class for vectors with concurrent (parallel) support. These vectors do not support erase operations but do support operations performed by multiple threads, such as push_back(). Note that once elements are inserted, they cannot be removed without calling the clear() member function, which removes every element in the vector. The container does not guarantee that elements are stored in consecutive addresses in memory. It is defined within the header "tbb/concurrent_vector.h" and is coded as:

#include <tbb/concurrent_vector.h>
//...//
tbb::concurrent_vector<typename> name;
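To see why a concurrent queue drops front() in favour of a combined "check and remove" operation, consider this standard-library-only sketch. LockedQueue and drainInOrder are hypothetical names, and the class is a plain mutex-guarded wrapper, not how TBB implements concurrent_queue. The point is only the interface: try_pop tests for an element and removes it in one step, so no other thread can change the front in between.

```cpp
#include <cassert>
#include <mutex>
#include <queue>

// Hypothetical mutex-guarded queue; a stand-in for illustration only.
template <typename T>
class LockedQueue {
public:
    void push(const T& value) {
        std::lock_guard<std::mutex> guard(m_);
        q_.push(value);
    }
    // Checks for an element and removes it under a single lock.
    bool try_pop(T& out) {
        std::lock_guard<std::mutex> guard(m_);
        if (q_.empty()) return false;
        out = q_.front();
        q_.pop();
        return true;
    }
private:
    std::mutex m_;
    std::queue<T> q_;
};

// Pushes 1, 2, 3 and pops them back, building the number 123 from the order.
inline int drainInOrder() {
    LockedQueue<int> q;
    q.push(1); q.push(2); q.push(3);
    int value = 0, digits = 0;
    while (q.try_pop(value)) digits = digits * 10 + value;
    assert(!q.try_pop(value));  // the queue is now empty
    return digits;
}
```

A separate front() followed by pop() would leave a window in which another thread could pop the same element.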

concurrent_hash_map: A container class that supports hashing in parallel. The keys are not ordered, and there will be at most one element for each key. It is defined within "tbb/concurrent_hash_map.h".

Algorithms

parallel_for: Provides concurrent support for for loops. This allows data to be divided up into chunks that each thread can work on. The code is defined in "tbb/parallel_for.h" and takes the template of:

tbb::parallel_for(firstPos, lastPos, increment, [](int i) { boo(); });
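For comparison, the chunking that parallel_for performs automatically can be sketched by hand with std::thread. This is only an illustration of the idea using the standard library. The function doubleAllChunked is a made-up name, and TBB's real scheduler is more sophisticated than one fixed chunk per thread.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Doubles every element, giving each thread one contiguous chunk of [0, n).
inline void doubleAllChunked(std::vector<int>& data, std::size_t numThreads) {
    assert(numThreads > 0);
    const std::size_t n = data.size();
    const std::size_t chunk = (n + numThreads - 1) / numThreads;  // round up
    std::vector<std::thread> workers;
    for (std::size_t t = 0; t < numThreads; ++t) {
        const std::size_t first = std::min(n, t * chunk);
        const std::size_t last = std::min(n, first + chunk);
        if (first == last) continue;  // nothing left for this thread
        workers.emplace_back([&data, first, last] {
            // Chunks are disjoint, so no two threads touch the same element.
            for (std::size_t i = first; i < last; ++i) data[i] *= 2;
        });
    }
    for (auto& w : workers) w.join();
}
```

All of the index arithmetic, thread creation and joining shown here is what the library call takes off the programmer's hands.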

parallel_scan: Provides concurrent support for a parallel scan. Intel notes that it may invoke the function up to twice as many times as the serial algorithm. The code is defined in "tbb/parallel_scan.h" and, according to Intel, takes the template of:

void parallel_scan( const Range& range, Body& body [, partitioner] );

parallel_invoke: Provides support for calling the functions provided in the arguments in parallel. It is defined within the header "tbb/parallel_invoke.h" and is coded as:

tbb::parallel_invoke(myFuncA, myFuncB, myFuncC);

Allocators

Handle memory allocation for concurrent containers. In particular, they help resolve issues that affect parallel programming. They are called scalable_allocator<type> and cache_aligned_allocator<type>, and are defined in "tbb/scalable_allocator.h" and "tbb/cache_aligned_allocator.h" respectively.

TBB Memory Allocation & Fixing Issues from Parallel Programming

TBB provides memory allocators that can be used just like the STL's std::allocator template class. Where TBB's allocators improve on it is in their support for common issues experienced in parallel programming. These allocators are called scalable_allocator<type> and cache_aligned_allocator<type>, and they reduce performance problems such as poor scalability and false sharing.

False Sharing

As you may have seen from the workshop "False Sharing", a major performance hit can occur in parallel when data that sits on the same cache line in memory is used by two threads. When threads attempt operations on the same cache line, they compete for access and the cache line is moved back and forth between them. Moving the line takes a significant number of clock cycles, which causes the performance problem. Through TBB, Intel created an allocator known as cache_aligned_allocator<type>. Objects allocated with it will not encounter false sharing with each other. Note that if only one of two objects is allocated by this allocator, false sharing between them may still occur. For compatibility's sake (so that programmers can simply use "find and replace"), cache_aligned_allocator takes the same arguments as the STL allocator. If you wish to use the allocator with STL containers, you only need to pass cache_aligned_allocator as the container's second template argument.

The following is an example provided by Intel to demonstrate this:

std::vector<int,cache_aligned_allocator<int> >;
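The same idea of keeping each thread's data on its own cache line can be sketched with only the standard library by using alignas. This is not TBB's allocator. PaddedCounter and countOnSeparateLines are hypothetical names, and the 64-byte line size is an assumption that holds on many x86 processors but is not universal.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <thread>

// Assumed cache-line size; check your target hardware.
constexpr std::size_t kCacheLine = 64;

// Each counter is aligned and padded to occupy a full cache line of its own.
struct alignas(kCacheLine) PaddedCounter {
    long value = 0;
};

// Two threads each increment their own counter; returns the combined total.
inline long countOnSeparateLines(long iterations) {
    assert(iterations >= 0);
    static_assert(sizeof(PaddedCounter) == kCacheLine,
                  "each counter should fill exactly one assumed cache line");
    PaddedCounter counters[2];
    auto work = [iterations](PaddedCounter& c) {
        for (long i = 0; i < iterations; ++i) ++c.value;
    };
    std::thread a(work, std::ref(counters[0]));
    std::thread b(work, std::ref(counters[1]));
    a.join();
    b.join();
    return counters[0].value + counters[1].value;
}
```

Without the alignas, the two long values would normally sit next to each other on one line, and each increment by one thread would force the other thread's copy of that line to be refreshed.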

Scaling Issue

When working in parallel, several threads may need to allocate from shared memory at the same time. This causes a slowdown when only a single thread can allocate memory at a time while the other threads are required to wait. Intel describes this issue in parallel programming as Scalability and answers it with scalable_allocator<type>, which permits concurrent memory allocation and is considered ideal for "programs that rapidly allocate and free memory".

Lock Convoying Problem

What is a Lock?

A Lock(also called "mutex") is a method for programmers to secure code that when executing in parallel can cause multiple threads to fight for a resource/container for some operation. When threads work in parallel to complete a task with containers, there is no indication when the thread reach the container and need to perform an operation on it. This causes problems when multiple threads are accessing the same place. When doing an insertion on a container with threads, we must ensure only 1 thread is capable of pushing to it or else threads may fight for control. By "Locking" the container, we ensure only 1 thread accesses at any given time.

To use a lock, your program must be doing work in parallel (for example with #include <thread>). The C++11 locks are available through #include <mutex>.
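As a minimal sketch of the idea, the function below lets several threads push to one std::vector while a std::mutex admits only one of them at a time. The name locked_fill and its parameters are hypothetical and exist only for this illustration.

```cpp
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical helper: num_threads threads each append per_thread values
// to one shared vector, taking the lock around every push_back.
std::vector<int> locked_fill(int num_threads, int per_thread) {
    std::vector<int> shared;
    std::mutex shared_mutex;  // guards every access to `shared`

    auto worker = [&shared, &shared_mutex, per_thread](int id) {
        for (int i = 0; i < per_thread; ++i) {
            // lock_guard locks here and unlocks when it goes out of scope,
            // so the lock is released even if push_back throws.
            std::lock_guard<std::mutex> guard(shared_mutex);
            shared.push_back(id * per_thread + i);
        }
    };

    std::vector<std::thread> threads;
    for (int t = 0; t < num_threads; ++t) {
        threads.emplace_back(worker, t);
    }
    for (auto& th : threads) {
        th.join();
    }
    return shared;
}
```

Every value arrives exactly once, but the order of the elements depends on how the threads happened to interleave.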

Note that locks come with problems of their own. If a thread acquires a lock but never releases it, every other thread that needs the lock is forced to wait indefinitely. Another problem is "deadlock", where each thread holds a lock the other needs, so each waits for the other to unlock and the program never makes progress.
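One common way to avoid the deadlock case in C++11 is to acquire all required locks in a single step with std::lock, which uses a deadlock-avoidance algorithm. The sketch below assumes a hypothetical Account type and transfer function; two threads move money in opposite directions, which could deadlock if each thread locked the two mutexes one after the other in a different order.

```cpp
#include <mutex>
#include <thread>

// Hypothetical type for illustration: a balance guarded by its own mutex.
struct Account {
    std::mutex m;
    int balance = 0;
};

// Moves `amount` between two distinct accounts.
void transfer(Account& from, Account& to, int amount) {
    // std::lock acquires both mutexes without risking deadlock,
    // regardless of the order in which other threads request them.
    std::lock(from.m, to.m);
    // adopt_lock tells each guard the mutex is already held, so the guard
    // only takes over responsibility for unlocking it.
    std::lock_guard<std::mutex> g1(from.m, std::adopt_lock);
    std::lock_guard<std::mutex> g2(to.m, std::adopt_lock);
    from.balance -= amount;
    to.balance += amount;
}

// Two threads transfer in opposite directions the same number of times;
// returns the final balance of the first account.
int run_transfers(int rounds) {
    Account a;
    Account b;
    a.balance = 100;
    b.balance = 100;
    std::thread t1([&a, &b, rounds] {
        for (int i = 0; i < rounds; ++i) transfer(a, b, 1);
    });
    std::thread t2([&a, &b, rounds] {
        for (int i = 0; i < rounds; ++i) transfer(b, a, 1);
    });
    t1.join();
    t2.join();
    return a.balance;
}
```

The sketch does not handle a transfer from an account to itself, since passing the same mutex to std::lock twice is not allowed.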

Parallelism Problems & Convoying in STL

Within STL, issues arise when you attempt to modify containers in parallel. When several threads update a container, say with push_back, it is difficult to determine where each insertion landed, because the threads update the container in no particular order. The size of the container is also uncertain, since each thread may be changing it as it goes (thread A may see a size of 4 while thread B sees a size of 9). For example, we can push data to a vector from several threads, but finding where that data ended up requires iterating through the vector. If one thread searches for the data while other operations are ongoing, another thread could grow the vector during the search. When a vector outgrows its capacity it moves its elements to newly allocated memory, so the memory the first thread is searching may no longer be valid. Without synchronization, such concurrent modification of a std::vector is a data race.
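To get a feel for how often a std::vector relocates its elements while it grows, the single-threaded sketch below counts capacity changes during a run of push_back calls. The function name count_reallocations is hypothetical, and the exact count depends on the standard library's growth policy, so no specific number is claimed here.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper: counts how many times the vector's capacity changes
// while n elements are pushed. Each change means the elements were moved to
// new storage, which invalidates iterators, pointers, and references that
// any other thread might be holding.
std::size_t count_reallocations(std::size_t n) {
    std::vector<int> v;
    std::size_t reallocations = 0;
    std::size_t last_capacity = v.capacity();
    for (std::size_t i = 0; i < n; ++i) {
        v.push_back(static_cast<int>(i));
        if (v.capacity() != last_capacity) {
            ++reallocations;
            last_capacity = v.capacity();
        }
    }
    return reallocations;
}
```

Calling v.reserve(n) before the loop would bring the count down to at most one, which is why reserving up front is a common way to keep iterators stable in serial code.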

Locks can solve the above issues, but they can cause significant performance problems because threads are forced to wait for each other before continuing. This performance hit is known as lock convoying.

Lock Convoying in TBB

TBB attempts to mitigate the performance cost of operating on a container from parallel code by providing its own containers, such as concurrent_vector. With concurrent_vector, operations that append elements, such as push_back, report where the new element was placed. TBB promises that once an element has been pushed it always stays in the same location, no matter how much the vector grows. With a standard vector, the data is copied to new memory when the vector outgrows its capacity, so any iterators held by threads traversing the vector at that moment may no longer be valid. concurrent_vector's guarantee means that multiple threads can iterate through the container while another thread is growing it. One catch is that an iterating thread may reach elements that are still under construction, so the programmer must make sure that construction and access are synchronized.

TBB also provides its own versions of the mutex such as spin_mutex for when mutual exclusion is still required.
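A spin mutex makes a waiting thread loop until the lock becomes free instead of putting it to sleep, which tends to suit very short critical sections. The sketch below shows the general idea in standard C++ only. SpinLock and spin_count are hypothetical names, and this is not TBB's implementation of spin_mutex.

```cpp
#include <atomic>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical minimal spin lock built on std::atomic_flag.
class SpinLock {
    std::atomic_flag flag = ATOMIC_FLAG_INIT;
public:
    void lock() {
        // test_and_set returns the previous value; keep spinning while
        // another thread already holds the flag.
        while (flag.test_and_set(std::memory_order_acquire)) {
            // busy-wait
        }
    }
    void unlock() {
        flag.clear(std::memory_order_release);
    }
};

// Several threads increment one counter, each increment guarded by the
// spin lock. lock_guard works because SpinLock has lock() and unlock().
long spin_count(int num_threads, int per_thread) {
    SpinLock lock;
    long counter = 0;
    std::vector<std::thread> threads;
    for (int t = 0; t < num_threads; ++t) {
        threads.emplace_back([&lock, &counter, per_thread] {
            for (int i = 0; i < per_thread; ++i) {
                std::lock_guard<SpinLock> guard(lock);
                ++counter;
            }
        });
    }
    for (auto& th : threads) {
        th.join();
    }
    return counter;
}
```

Spinning wastes processor time when the lock is held for long, so a sleeping mutex such as std::mutex is usually the better default for longer critical sections.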

You can find more information on convoying and containers here: https://software.intel.com/en-us/blogs/2008/10/20/tbb-containers-vs-stl-performance-in-the-multi-core-age

A Comparison between Serial Vector and TBB concurrent_vector

If only you knew the power of the Building Blocks

Using the code below, we will measure how quickly some vector operations complete with STL's vector and TBB's concurrent_vector. The code performs "push back" operations both serially and concurrently, then reports the time taken to complete n push back operations, where n is given on the command line.

#include <chrono>
#include <cstdlib>   // std::atoi
#include <iostream>
#include <string>
#include <vector>
#include <tbb/tbb.h>
#include <tbb/concurrent_vector.h>

using namespace std::chrono;

// STL and TBB vectors under test
tbb::concurrent_vector<std::string> con_vector_string;
std::vector<std::string> s_vector_string;
tbb::concurrent_vector<int> con_vector_int;
std::vector<int> s_vector_int;

// Print a label followed by the elapsed time in milliseconds
void reportTime(const char* msg, steady_clock::duration span) {
    auto ms = duration_cast<milliseconds>(span);
    std::cout << msg << " - took - " <<
        ms.count() << " milliseconds" << std::endl;
}

int main(int argc, char** argv) {
    // Expect exactly one argument: the number of push back operations
    if (argc != 2) { return 1; }
    int size = std::atoi(argv[1]);
    steady_clock::time_point ts, te;

    /*
        TEST WITH STRING OBJECT
    */
    ts = steady_clock::now();
    // serial for loop
    for (int i = 0; i < size; ++i)
        s_vector_string.push_back(std::string());
    te = steady_clock::now();
    reportTime("Serial vector speed - STRING: ", te - ts);

    ts = steady_clock::now();
    // concurrent for loop: iterations are spread across TBB worker threads
    tbb::parallel_for(0, size, 1, [&](int i) {
        con_vector_string.push_back(std::string());
    });
    te = steady_clock::now();
    reportTime("Concurrent vector speed - STRING: ", te - ts);

    /*
        TEST WITH INT DATA TYPE
    */
    std::cout << "\n\n";
    ts = steady_clock::now();
    // serial for loop
    for (int i = 0; i < size; ++i)
        s_vector_int.push_back(i);
    te = steady_clock::now();
    reportTime("Serial vector speed - INT: ", te - ts);

    ts = steady_clock::now();
    // concurrent for loop: element order depends on thread scheduling
    tbb::parallel_for(0, size, 1, [&](int i) {
        con_vector_int.push_back(i);
    });
    te = steady_clock::now();
    reportTime("Concurrent vector speed - INT: ", te - ts);
}

The Speed Improvement

As the table suggests, completing push back operations with TBB's concurrent_vector can perform better than a serial std::vector. concurrent_vector also has the benefit that it never moves existing elements as push back operations are completed. An STL vector stores its elements in one dynamically allocated block, so when it runs out of capacity it must allocate a larger block and copy its elements over, which may further slow down the push back operation.

Why was TBB slower for Int?

When dealing with primitive types like int, the push back operation itself is not very complex, so a serial process can complete it quite quickly. The vector can also likely hold many more elements before it needs to reallocate its memory. TBB becomes practical when performing a more complex action on a primitive type or a simple action on a more complex type. Parallel overhead (the resources required to run TBB's threads and scheduling) also adds time, which could be the cause of the slowdown in the above comparison. TBB provides automatic chunking of the iteration range, and Intel's guidance is that a loop typically needs to take at least a million clock cycles for parallel_for to improve its performance.